How to Build an AI App


AI App Development Journey:

Building an AI app involves multiple phases: planning, data preparation, model selection, development, testing, and deployment. Success requires understanding both AI concepts and software development principles.

Key phases of AI app development:

- Planning: Define problem, requirements, and success metrics

- Data: Collect, clean, and prepare training data

- Model: Select and train appropriate AI models

- Development: Build the application interface and backend

- Testing: Validate performance and accuracy

- Deployment: Launch and monitor in production

Popular frameworks include TensorFlow, PyTorch, Hugging Face, and cloud AI services. The choice depends on your specific requirements, technical expertise, and deployment goals.

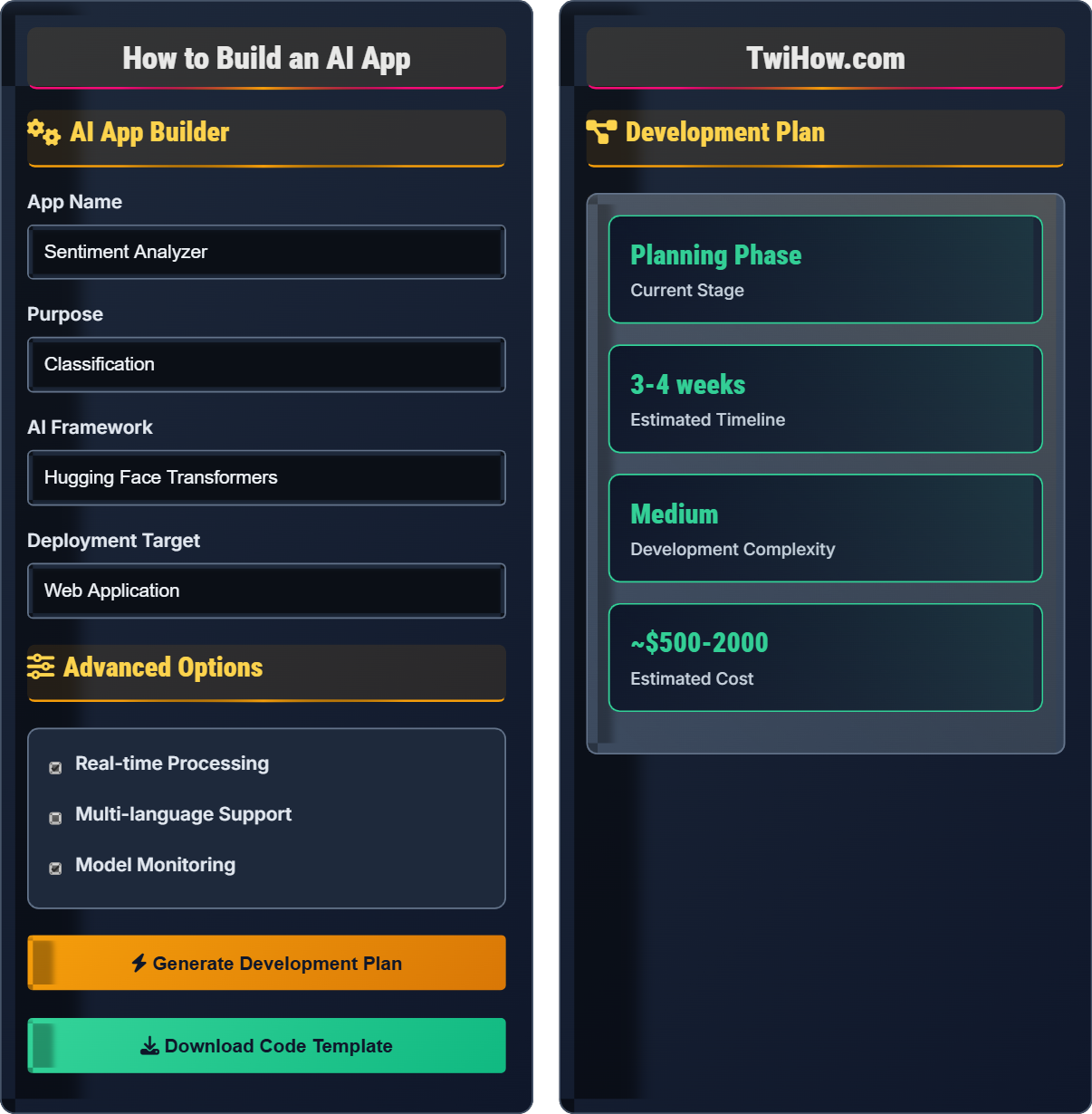

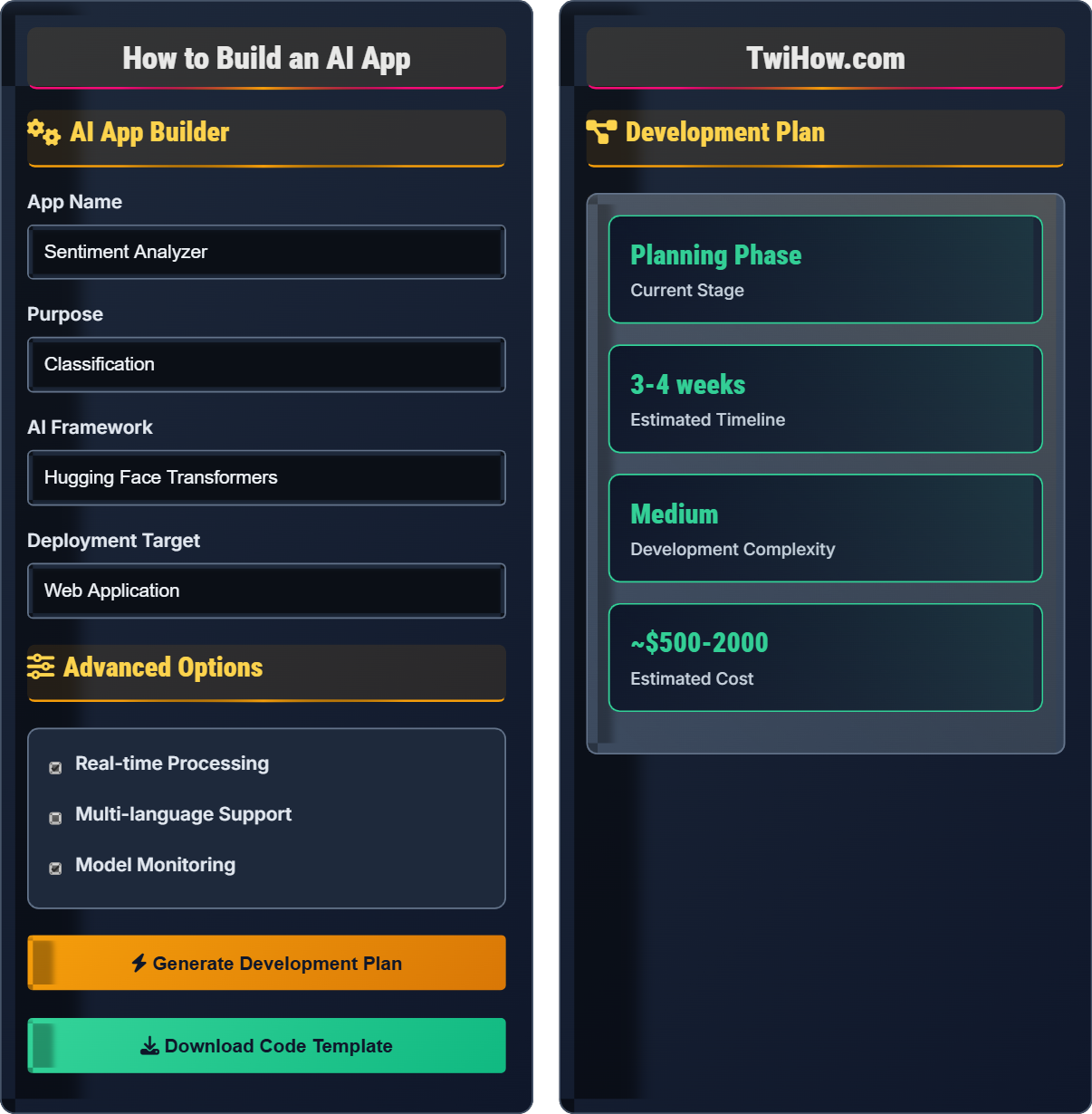

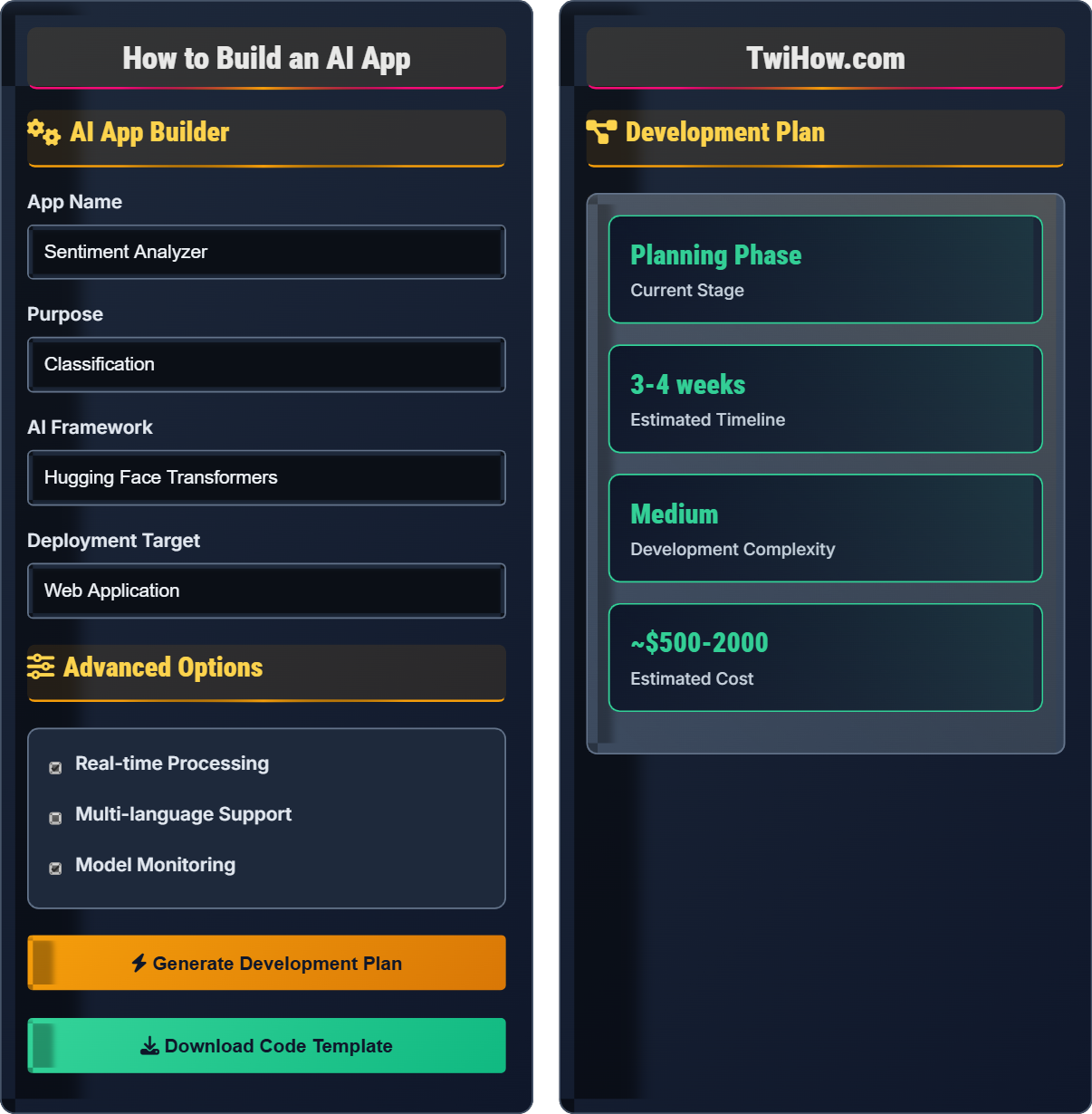

Development Plan

- Project Setup: Initialize repository, install dependencies, configure environment

- Data Preparation: Collect, clean, and preprocess training data

- Model Selection: Choose appropriate model architecture for sentiment analysis

- Training Pipeline: Implement training loop with validation

- Model Evaluation: Test accuracy and performance metrics

- API Development: Create RESTful endpoints for inference

- Frontend Creation: Build user interface for input/output

- Testing: Unit tests, integration tests, and user acceptance

- Deployment: Deploy to chosen platform with monitoring

- Maintenance: Monitor performance and update model as needed

Development Phases

Define your AI app's purpose, target audience, and success metrics. Identify the problem you're solving and the value your app will provide.

- Problem statement and objectives

- User personas and use cases

- Success metrics and KPIs

- Technical requirements document

- Project timeline and milestones

Gather, clean, and prepare the data needed to train your AI model. This is often the most time-consuming phase of AI development.

- Pandas for data manipulation

- NumPy for numerical operations

- Scikit-learn for preprocessing

- Data visualization libraries

- Labeling tools for annotations
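As a rough illustration of the cleaning step, the sketch below normalizes review text and drops incomplete or duplicate rows. It uses only the standard library so it stays self-contained; in practice the same steps would usually be written with Pandas. The field names `text` and `label` and the sample rows are assumptions made up for this example.

```python
import re

def clean_text(text: str) -> str:
    """Strip HTML-like tags, collapse whitespace, and lowercase."""
    text = re.sub(r"<[^>]+>", " ", text)
    text = re.sub(r"\s+", " ", text)
    return text.strip().lower()

def clean_records(records: list[dict]) -> list[dict]:
    """Drop rows with missing fields and rows whose cleaned text repeats."""
    seen: set[str] = set()
    cleaned = []
    for row in records:
        text, label = row.get("text"), row.get("label")
        if not text or label is None:
            continue  # incomplete row
        normalized = clean_text(text)
        if not normalized or normalized in seen:
            continue  # empty after cleaning, or a duplicate
        seen.add(normalized)
        cleaned.append({"text": normalized, "label": label})
    return cleaned

# Made-up sample rows (hypothetical schema: "text" and "label").
raw = [
    {"text": "Great product!<br>", "label": 1},
    {"text": "great   product!", "label": 1},  # duplicate after normalization
    {"text": None, "label": 0},                # missing text
    {"text": "Terrible support", "label": 0},
]
cleaned = clean_records(raw)
print(cleaned)
```

Deduplicating on the normalized text rather than the raw text catches near-identical rows that differ only in markup, casing, or spacing.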

Select, train, and validate your AI model. Experiment with different architectures and hyperparameters to optimize performance.

- TensorFlow/Keras for deep learning

- PyTorch for research and flexibility

- Hugging Face for NLP tasks

- Scikit-learn for traditional ML

- Cloud AI services for managed solutions
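The train-then-validate loop can be sketched without any ML framework. The example below is a deliberately tiny stand-in: the "model" is a single score threshold, "training" means picking the best candidate threshold on the training split, and the held-out split checks how well that choice generalizes. The dataset, the candidate values, and the function names are all assumptions for illustration; a real project would use one of the frameworks listed above.

```python
import random

def split_train_val(rows, val_fraction=0.25, seed=0):
    """Shuffle deterministically and hold out a validation slice."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    n_val = int(len(shuffled) * val_fraction)
    return shuffled[n_val:], shuffled[:n_val]

def accuracy(threshold, rows):
    """Fraction of rows where (score >= threshold) matches the label."""
    hits = sum((score >= threshold) == bool(label) for score, label in rows)
    return hits / len(rows)

# Toy dataset (made up): the label is 1 exactly when the score is >= 0.5.
data = [(i / 20, int(i >= 10)) for i in range(20)]
train, val = split_train_val(data)

# "Training" here is just choosing the best candidate on the training split.
candidates = [0.3, 0.4, 0.5, 0.6, 0.7]
best = max(candidates, key=lambda t: accuracy(t, train))

train_acc = accuracy(best, train)
val_acc = accuracy(best, val)
print(f"threshold={best} train_acc={train_acc:.2f} val_acc={val_acc:.2f}")
```

Keeping the validation rows out of the selection step is the point of the sketch: a setting that looks perfect on the training split can still miss on data it has not seen.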

Build the application interface and integrate the trained model. Focus on user experience and efficient model serving.

- REST APIs for web integration

- SDKs for mobile apps

- Real-time inference pipelines

- Batch processing systems

- Edge deployment solutions
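A REST inference endpoint can be sketched with the standard library alone. In the example below, `predict_sentiment` is a hypothetical keyword rule standing in for a trained model, and the `/predict` path, the JSON shape, and the port are assumptions rather than a prescribed API. Request validation lives in a plain function so it can be tested without starting a server.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer

def predict_sentiment(text: str) -> dict:
    """Placeholder for a real model: a hypothetical keyword rule."""
    positive = {"good", "great", "love", "excellent"}
    negative = {"bad", "terrible", "hate", "poor"}
    words = set(text.lower().split())
    score = len(words & positive) - len(words & negative)
    label = "positive" if score > 0 else "negative" if score < 0 else "neutral"
    return {"label": label, "score": score}

def handle_predict(body: bytes) -> tuple[int, dict]:
    """Validate the JSON request body and return (HTTP status, response)."""
    try:
        text = json.loads(body)["text"]
        if not isinstance(text, str) or not text.strip():
            raise ValueError("text must be a non-empty string")
    except (KeyError, TypeError, ValueError) as exc:  # includes JSON decode errors
        return 400, {"error": f"invalid request: {exc}"}
    return 200, predict_sentiment(text)

class PredictHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        if self.path != "/predict":
            status, result = 404, {"error": "not found"}
        else:
            length = int(self.headers.get("Content-Length", 0))
            status, result = handle_predict(self.rfile.read(length))
        data = json.dumps(result).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)

def serve(port: int = 8000) -> None:
    """Call serve() to run locally; it is not invoked automatically here."""
    HTTPServer(("127.0.0.1", port), PredictHandler).serve_forever()

print(handle_predict(b'{"text": "great product"}'))
```

Separating `handle_predict` from the HTTP handler keeps the model-facing logic independent of the web framework, so it could later be moved behind a production server without changes.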

AI Frameworks Comparison

Consider these factors:

- Experience Level: Beginners might prefer Hugging Face or OpenAI API

- Performance Needs: TensorFlow has traditionally been favored for production and PyTorch for research, though both now cover either use

- Data Size: Cloud APIs for small projects, local frameworks for large datasets

- Deployment Target: Mobile deployment requires specific frameworks

- Customization Needs: Custom models require full frameworks

AI App Development Quiz

Which phase of AI app development typically requires the most time and effort?

Data collection and preparation is commonly estimated to consume 60-80% of total development time. This phase involves gathering, cleaning, labeling, and validating data, which is crucial for model performance. Without quality data, even the most sophisticated models will fail.

The answer is B) Data collection and preparation.

Many beginners underestimate the importance of data preparation in AI development. The "garbage in, garbage out" principle applies strongly to AI - model quality is directly tied to data quality. This phase requires domain expertise, data cleaning skills, and often manual annotation efforts.

Data Preprocessing: Cleaning and transforming raw data for model training

Data Quality: Accuracy, completeness, and consistency of data

Feature Engineering: Creating meaningful input variables for models

• Data quality determines model quality

• Plan for 60-80% time investment

• Validate data thoroughly

• Automate data cleaning when possible

• Document your data pipeline

• Validate with domain experts

Common mistakes:

• Rushing through data preparation

• Not validating data quality

• Insufficient data quantity
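The data quality terms defined above (completeness, consistency) can be turned into a quick automated check. The sketch below is one possible report, assuming a hypothetical schema with `text` and `label` fields and made-up sample rows; it is standard-library Python and not a substitute for review by domain experts.

```python
from collections import Counter

def quality_report(rows, required=("text", "label")):
    """Summarize completeness, duplicate texts, and label balance."""
    total = len(rows)
    complete = [
        r for r in rows
        if all(r.get(field) not in (None, "") for field in required)
    ]
    texts = [r["text"] for r in complete]
    labels = Counter(r["label"] for r in complete)
    return {
        "rows": total,
        "completeness": len(complete) / total if total else 0.0,
        "duplicate_texts": len(texts) - len(set(texts)),
        "label_counts": dict(labels),
    }

# Made-up sample rows for illustration.
rows = [
    {"text": "fast shipping", "label": 1},
    {"text": "fast shipping", "label": 1},  # exact duplicate
    {"text": "", "label": 0},               # incomplete
    {"text": "broke in a week", "label": 0},
]
report = quality_report(rows)
print(report)
```

Running a report like this before every training run gives an early warning when an upstream source starts producing empty or repeated rows.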

Compare TensorFlow and PyTorch for AI app development. When should you choose one over the other?

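TensorFlow: Production-focused, Google-backed, with excellent deployment tools. TensorFlow 1.x used static computation graphs; TensorFlow 2.x runs eagerly by default and compiles graphs on request via tf.function. Best for: Production deployment, mobile apps, and systems requiring stability.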

PyTorch: Research-focused, dynamic computation graphs, Pythonic, originally developed at Facebook (now Meta). Best for: Research, experimentation, rapid prototyping, debugging.

Choose TensorFlow when: Building production systems, deploying to mobile, needing stable, well-tested implementations.

Choose PyTorch when: Prototyping, research, needing flexibility, debugging complex models.

Hybrid Approach: Use PyTorch for development/research, then convert to TensorFlow for deployment, typically through an interchange format such as ONNX.

The TensorFlow vs PyTorch debate reflects the tension between research flexibility and production stability. Both frameworks have matured significantly, but they still retain their original design philosophies. The choice often depends on your project's stage and requirements.

Static Graph: Computation graph defined before execution

Dynamic Graph: Computation graph built during execution

Production-Ready: Stable, optimized for deployment

• Match framework to project phase

• Consider team expertise

• Evaluate deployment requirements

• Learn both frameworks

• Consider Keras for simplicity

• Look at community support

Common mistakes:

• Choosing based on popularity only

• Not considering deployment needs

• Ignoring team expertise

You're tasked with building an AI app that analyzes customer reviews for sentiment. The app needs to process 10,000 reviews per day with 95% accuracy. Plan the technical architecture, considering data requirements, model choice, infrastructure, and estimated timeline.

Data Requirements: 10,000+ labeled reviews for training, balanced positive/negative sentiment distribution. Include domain-specific language if applicable.

Model Choice: Pre-trained BERT or RoBERTa model fine-tuned on review data. Provides good accuracy with reasonable training time.

Infrastructure: Cloud-based API service (AWS/GCP) with auto-scaling to handle daily load. Redis for caching frequent queries.

Architecture: REST API with asynchronous processing for bulk uploads, real-time processing for individual queries.

Timeline: 4-6 weeks (2 weeks data prep, 1 week model training, 1 week app dev, 1 week testing/deployment).

Monitoring: Accuracy tracking, response time metrics, error logging.

This example demonstrates the importance of considering all aspects of AI app development: data requirements, model selection, infrastructure planning, and operational concerns. Successful AI apps require careful planning of both technical and operational aspects.
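The scenario's targets translate into concrete numbers with a little arithmetic. The sketch below converts 10,000 reviews per day into requests per second and shows how many misclassifications a 95% accuracy target still allows each day. The peak factor of 10 is an assumption for illustration, not a figure from the scenario.

```python
SECONDS_PER_DAY = 24 * 60 * 60

def required_throughput(reviews_per_day, peak_factor=10.0):
    """Return (average, assumed peak) requests per second."""
    average = reviews_per_day / SECONDS_PER_DAY
    return average, average * peak_factor

def expected_errors(reviews_per_day, accuracy):
    """Misclassified reviews per day at the given accuracy."""
    return round(reviews_per_day * (1 - accuracy))

avg_rps, peak_rps = required_throughput(10_000)
errors_per_day = expected_errors(10_000, 0.95)
print(f"average: {avg_rps:.3f} req/s, assumed peak: {peak_rps:.2f} req/s")
print(f"errors per day at 95% accuracy: {errors_per_day}")
```

At roughly 0.12 requests per second on average, the daily load is modest for a single model server, so auto-scaling mainly protects against bursts such as bulk uploads rather than sustained traffic.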

Pre-trained Model: Model trained on general data, fine-tuned for specific task

Auto-scaling: Automatic adjustment of resources based on demand

Asynchronous Processing: Non-blocking operations for better performance

• Plan for scale from beginning

• Consider data requirements early

• Design for monitoring

• Use cloud managed services

• Implement caching strategies

• Plan for error handling

Common mistakes:

• Underestimating data needs

• Not planning for scale

• Ignoring monitoring needs
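The caching idea from the infrastructure plan can be sketched as follows. An in-memory dict stands in for Redis here, and the class name, the key scheme (a SHA-256 hash of the normalized text), and the simple oldest-first eviction are all assumptions for illustration.

```python
import hashlib

class PredictionCache:
    """Cache model outputs keyed by a hash of the normalized input text."""

    def __init__(self, model_fn, max_entries=10_000):
        self._model_fn = model_fn
        self._store = {}  # stand-in for an external cache such as Redis
        self._max_entries = max_entries
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(text):
        normalized = " ".join(text.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def predict(self, text):
        key = self._key(text)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        result = self._model_fn(text)
        if len(self._store) >= self._max_entries:
            # Dicts keep insertion order, so this evicts the oldest entry.
            self._store.pop(next(iter(self._store)))
        self._store[key] = result
        return result

# Hypothetical model that records how often it is actually invoked.
model_calls = []
def fake_model(text):
    model_calls.append(text)
    return "positive"

cache = PredictionCache(fake_model)
cache.predict("Great  product")
cache.predict("great product")  # same text after normalization: cache hit
print(cache.hits, cache.misses, len(model_calls))
```

Normalizing before hashing lets trivially different spellings of the same review share one cache entry, at the cost of assuming the model itself is insensitive to casing and spacing.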

Your trained AI model achieves 98% accuracy in testing but performs poorly in production with only 70% accuracy. Identify possible causes and solutions for this deployment problem.

Possible Causes:

1. Data Drift: Production data differs from training data distribution

2. Concept Drift: Relationships between features and target change over time

3. Preprocessing Mismatch: Different data preprocessing in production

4. Feature Engineering Issues: Features unavailable or computed differently in production

5. System Integration Problems: Model inputs corrupted during API calls

Solutions:

• Implement data drift detection and alerts

• Retrain model periodically with fresh data

• Ensure identical preprocessing in training and production

• Monitor feature distributions and model inputs

• Implement comprehensive logging and error tracking
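One common way to detect data drift on a numeric feature is the Population Stability Index (PSI), which compares the binned distribution seen in training with the one seen in production. The sketch below is a minimal standard-library version; the bin count, the epsilon used for empty bins, and the 0.25 alert threshold (a widely quoted rule of thumb, not a standard) are assumptions, as are the made-up sample values.

```python
import math

def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline and a new sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def fractions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        return [max(c / len(values), eps) for c in counts]

    e, a = fractions(expected), fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Made-up feature values: training is uniform on [0, 1); production shifted up.
training = [i / 100 for i in range(100)]
production = [0.5 + i / 200 for i in range(100)]

baseline_psi = psi(training, training)
drift_psi = psi(training, production)
ALERT_THRESHOLD = 0.25  # rule-of-thumb value, assumed for this sketch
print(f"baseline={baseline_psi:.3f} drifted={drift_psi:.3f} "
      f"alert={drift_psi > ALERT_THRESHOLD}")
```

A check like this would run on a schedule per feature, with alerts feeding the retraining decision; it measures input drift only and says nothing about concept drift, which needs labeled production outcomes.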

This gap between test and production accuracy is common in AI deployment: models perform well in controlled testing environments but fail in production. This highlights the importance of considering the entire ML lifecycle, not just model training. Operational concerns like data consistency, monitoring, and model maintenance are equally important.

Data Drift: Change in input data distribution over time

Concept Drift: Change in relationship between inputs and outputs

Model Lifecycle: End-to-end process from training to production

• Test in production-like environments

• Monitor continuously

• Plan for model updates

• Shadow model deployment

• A/B testing for model changes

• Automated retraining pipelines

Common mistakes:

• Ignoring production monitoring

• Not planning for model updates

• Inadequate testing environments
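Shadow deployment, listed among the practices above, can be sketched in a few lines: the current model answers every request, while a candidate model runs on the same input purely for comparison. The function names, the log format, and both stand-in models below are hypothetical.

```python
def serve_with_shadow(request, primary, shadow, log):
    """Return the primary model's answer; run the shadow only for comparison."""
    result = primary(request)
    try:
        candidate = shadow(request)
        log.append({"request": request, "primary": result,
                    "shadow": candidate, "agree": result == candidate})
    except Exception as exc:  # a failing shadow must never affect users
        log.append({"request": request, "primary": result,
                    "shadow": None, "agree": False, "error": repr(exc)})
    return result

def agreement_rate(log):
    """Share of logged requests where both models gave the same answer."""
    return sum(entry["agree"] for entry in log) / len(log) if log else 0.0

# Hypothetical stand-ins for the current and candidate models.
def current_model(text):
    return "positive" if "good" in text else "negative"

def candidate_model(text):
    return "positive" if "good" in text or "great" in text else "negative"

comparison_log = []
requests = ["good value", "great value", "bad value", "slow delivery"]
answers = [serve_with_shadow(r, current_model, candidate_model, comparison_log)
           for r in requests]
print(answers, agreement_rate(comparison_log))
```

Disagreements in the log are the interesting cases to review by hand before promoting the candidate, for example through the A/B test mentioned in the same list.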

What is the most critical ethical consideration when building an AI app for loan approvals?

Algorithmic fairness is critical in loan approval systems because biased models can perpetuate discrimination and deny fair access to credit. Financial AI systems must ensure equal treatment regardless of protected characteristics like race, gender, or ethnicity. Fair lending laws also require lenders to prevent discriminatory outcomes, so bias prevention is a compliance matter as well as an ethical one.

The answer is B) Algorithmic fairness and bias prevention.

Ethical considerations in AI development are not optional - they're essential, especially in high-stakes applications like finance, healthcare, and criminal justice. Developers must proactively address fairness, transparency, and accountability to prevent harm and ensure responsible AI deployment.

Algorithmic Fairness: Equal treatment regardless of protected attributes

Protected Attributes: Characteristics like race, gender, age

Responsible AI: Ethical development and deployment practices

• Test for bias during development

• Ensure regulatory compliance

• Consider societal impact

• Diverse development teams

• Bias detection tools

• External audits

Common mistakes:

• Ignoring ethical implications

• Not testing for bias

• Lack of oversight
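Testing for bias can start with simple group-level metrics. The sketch below computes approval rates per group, the demographic parity gap (difference between the highest and lowest rate), and the disparate impact ratio (lowest rate divided by highest). The group labels and decisions are made up, and the 0.8 cutoff is the "four-fifths" rule of thumb, used here only as an assumed screening threshold and not as a legal test.

```python
from collections import defaultdict

def approval_rates(decisions):
    """decisions: iterable of (group, approved) pairs -> approval rate per group."""
    totals, approved = defaultdict(int), defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}

def demographic_parity_gap(rates):
    """Difference between the highest and lowest group approval rates."""
    return max(rates.values()) - min(rates.values())

def disparate_impact_ratio(rates):
    """Lowest group approval rate divided by the highest."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0

# Made-up decisions: group A approved 8 of 10, group B approved 5 of 10.
decisions = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)
rates = approval_rates(decisions)
gap = demographic_parity_gap(rates)
ratio = disparate_impact_ratio(rates)
FOUR_FIFTHS = 0.8  # rule-of-thumb screening threshold (assumption)
print(rates, round(gap, 3), round(ratio, 3), ratio < FOUR_FIFTHS)
```

Metrics like these only flag disparities; deciding whether a gap is justified still requires the external audits and regulatory review mentioned above.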

FAQ

Q: Do I need a lot of math knowledge to build AI apps?

A: You don't need deep mathematical expertise to start building AI apps. Modern frameworks like Hugging Face and TensorFlow provide high-level APIs that abstract away complex math. However, understanding basic concepts like gradients, loss functions, and overfitting helps with debugging and optimization. As you advance, deeper mathematical knowledge becomes more valuable for custom architectures and research applications.

Q: How long does it take to build a simple AI app?

A: A simple AI app (like sentiment analysis) can be built in 1-2 weeks with basic functionality. However, a production-ready app with proper error handling, a user interface, and monitoring takes 1-3 months. The timeline depends on your experience, data availability, and the complexity of your requirements. Start with a minimum viable product and iterate based on user feedback.