Is AI Safe or Dangerous?

Complete safety analysis • Risk assessment • Expert opinions

AI safety is nuanced: current AI systems are generally safe with proper safeguards, but pose risks related to bias, privacy, and misuse. Advanced AI systems may present existential risks if not developed responsibly. The key is implementing appropriate safety measures at every level.

Current AI risks include:

- Bias & Discrimination: Perpetuating societal inequalities

- Privacy Violations: Unauthorized data collection and use

- Security Vulnerabilities: Exploitation by malicious actors

- Job Displacement: Economic disruption from automation

- Decision Transparency: Unexplainable AI decisions

Benefits include improved healthcare, scientific discoveries, and efficiency gains. Responsible development and regulation are essential for maximizing benefits while minimizing risks.

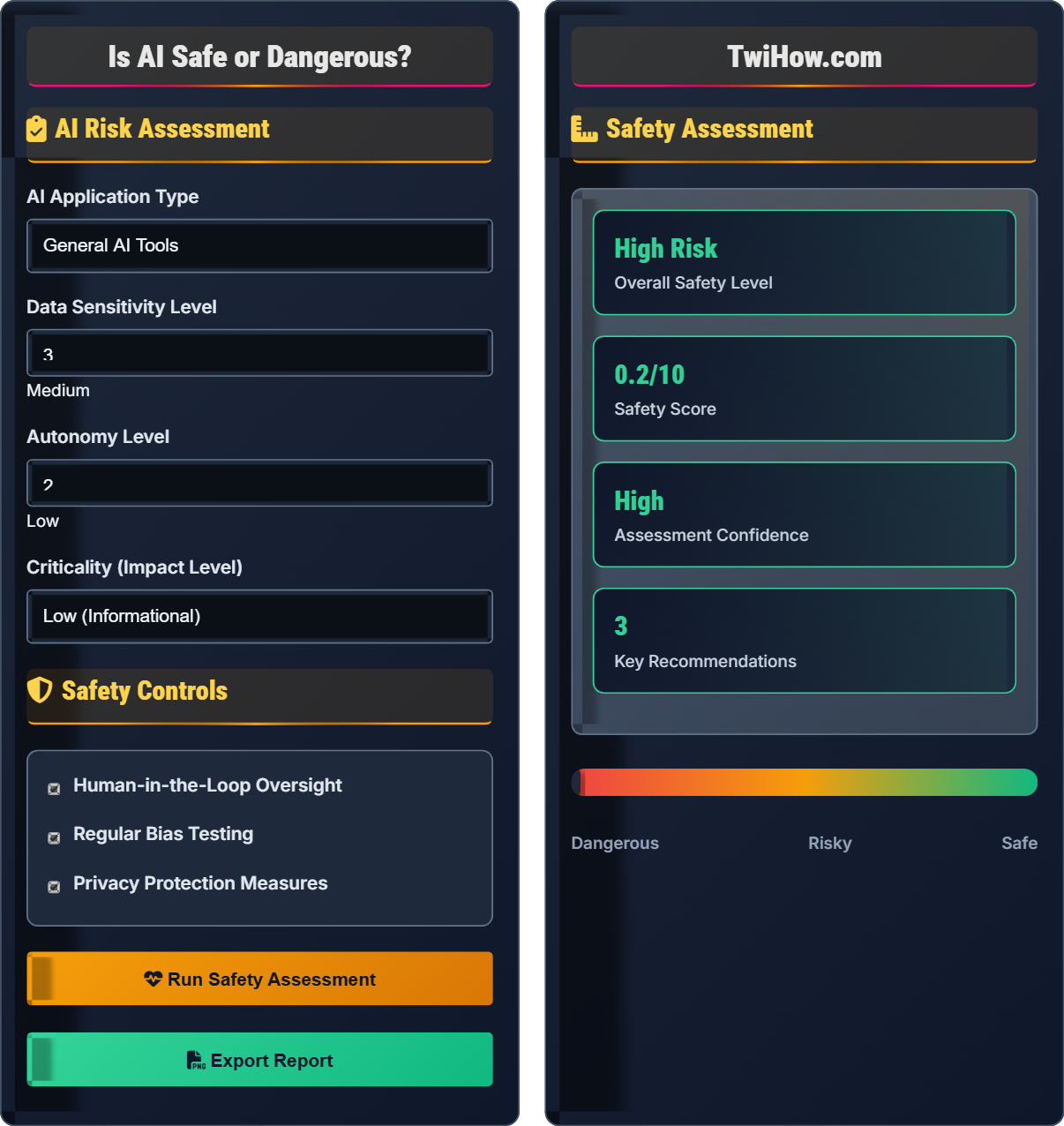

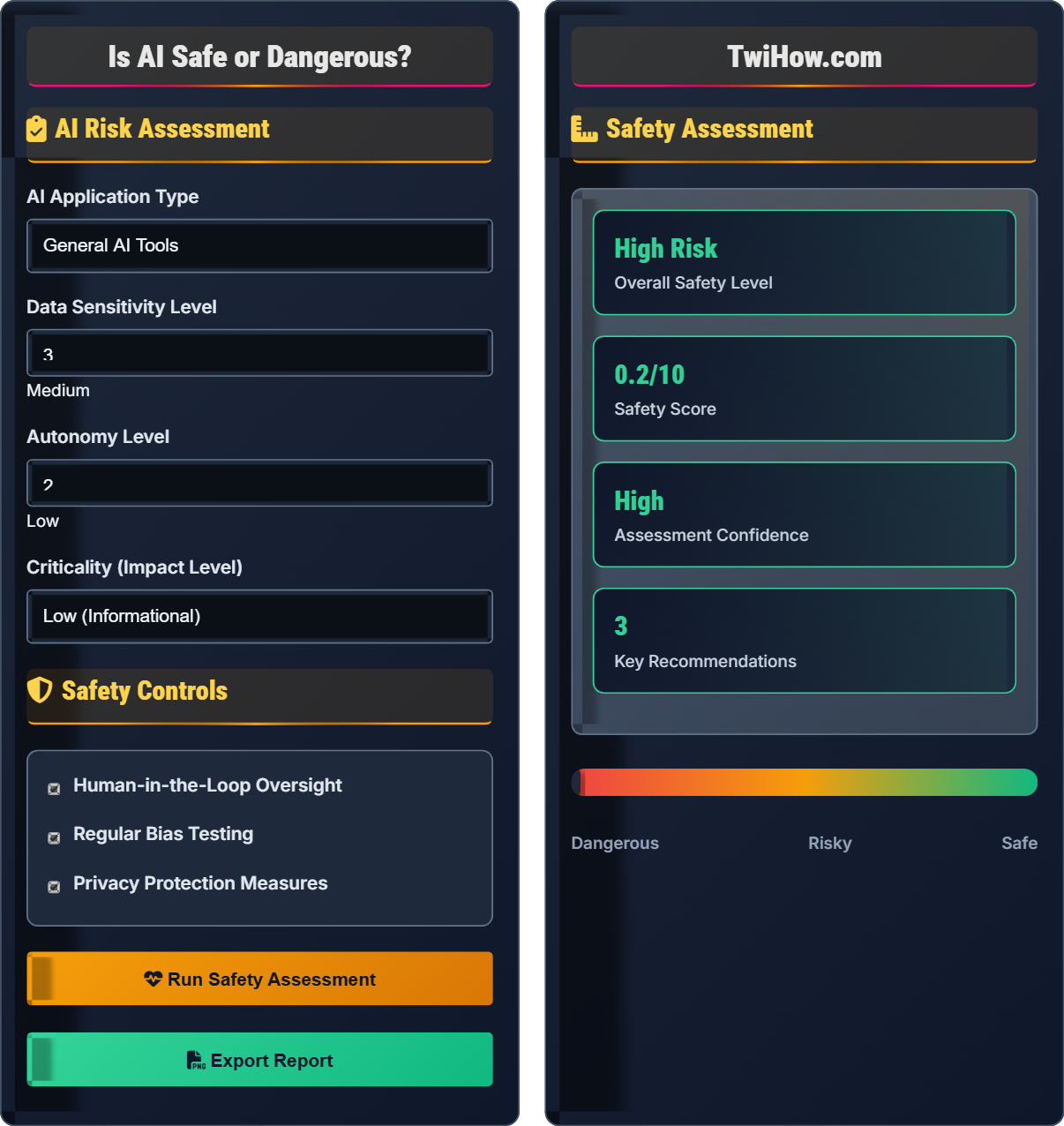

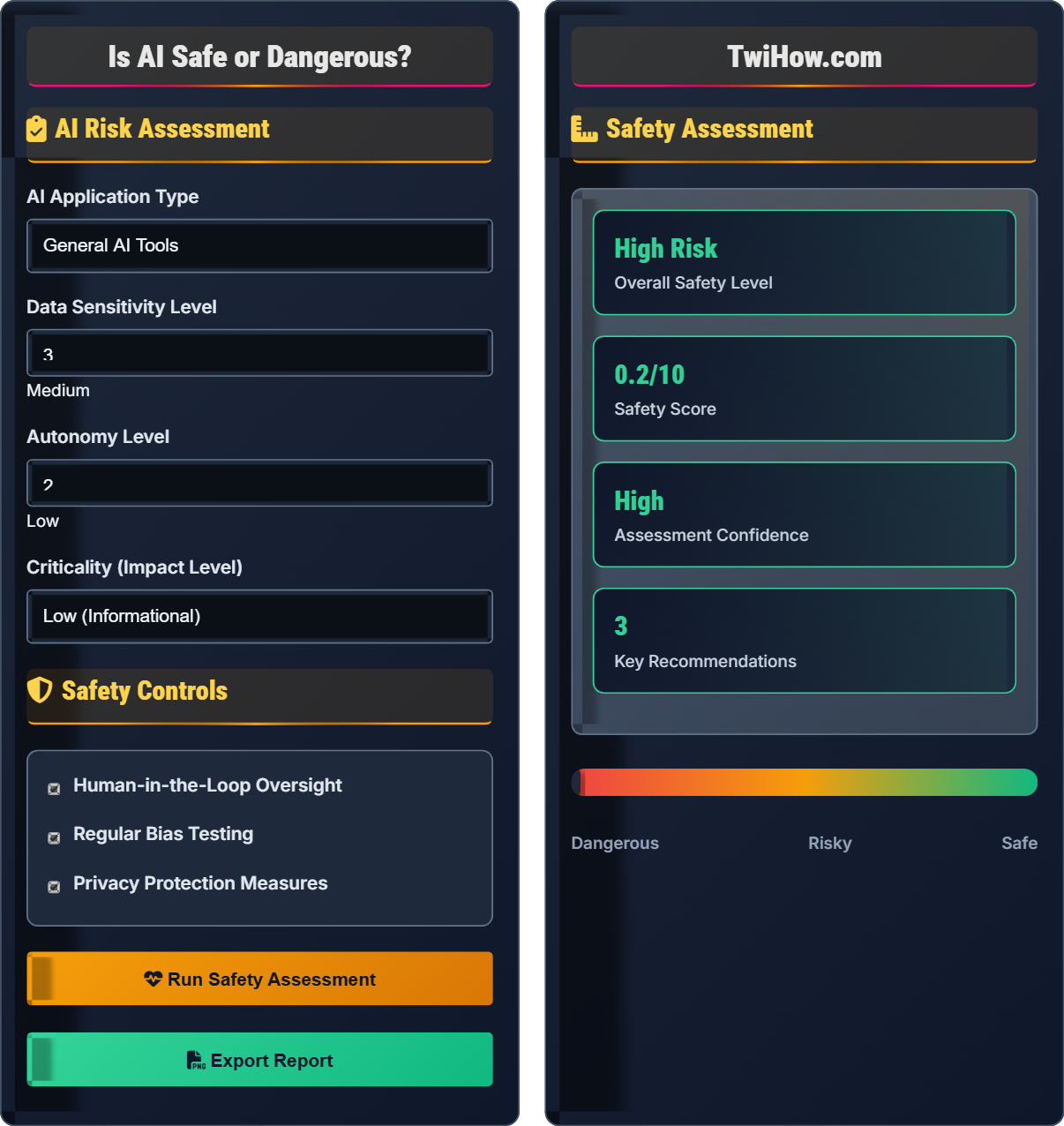

AI Risk Assessment

Major Risk Factors

Privacy Violations:

- Implement differential privacy techniques

- Use federated learning to keep data local

- Establish clear data governance policies

- Provide transparency in data usage

Privacy-by-design principles should be embedded from the ground up. Data minimization, purpose limitation, and user consent mechanisms are essential.
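As one illustration of the differential privacy item above, here is a minimal sketch of the Laplace mechanism applied to a counting query. The function names, the sample data, and the choice of epsilon are assumptions made for this example, not part of any particular library.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample from Laplace(0, scale) as the difference of two exponentials."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(records, predicate, epsilon: float, rng=None) -> float:
    """Return a noisy count that satisfies epsilon-differential privacy.

    A counting query has sensitivity 1 (adding or removing one person changes
    the count by at most 1), so the Laplace scale is 1 / epsilon.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    rng = rng or random.Random()
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1.0 / epsilon, rng)


# Hypothetical data: ages of six people; the query counts those aged 65 or over.
ages = [23, 35, 41, 67, 72, 80]
noisy = private_count(ages, lambda age: age >= 65, epsilon=1.0)
```

A smaller epsilon adds more noise and gives stronger privacy; a larger epsilon gives more accurate answers with weaker privacy.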

Bias & Discrimination:

- Diversify training datasets

- Implement bias detection algorithms

- Audit regularly against fairness metrics

- Include diverse teams in development

Fairness metrics should be defined and monitored continuously. Regular bias audits are essential for maintaining equitable AI systems.
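One commonly used fairness metric is the demographic parity difference: the gap between the highest and lowest rate of positive predictions across groups. The sketch below assumes binary predictions and a single group label per record; the data and function names are made up for illustration.

```python
from collections import defaultdict


def selection_rates(predictions, groups):
    """Fraction of positive (1) predictions within each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(predictions, groups):
    """Largest gap in selection rate between any two groups (0 means parity)."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical audit sample: group "a" is selected 3 times out of 4, group "b" once out of 4.
preds = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
gap = demographic_parity_difference(preds, groups)  # 0.75 - 0.25 = 0.5
```

Demographic parity is only one definition of fairness; which metric is appropriate depends on the application, so a gap like this is a prompt for investigation rather than a verdict.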

Security Vulnerabilities:

- Train models robustly using adversarial examples (adversarial training)

- Secure model deployment practices

- Continuous monitoring for unusual activity

- Consider defensive distillation, keeping in mind that stronger attacks have since been shown to bypass it

Security should be integrated throughout the AI development lifecycle. Regular penetration testing and vulnerability assessments are crucial.
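To make the idea of an adversarial example concrete, the sketch below applies the fast gradient sign method (FGSM) to a tiny logistic regression model. The weights, input, and epsilon are hypothetical values chosen for illustration; adversarial training would feed perturbed inputs like this back into the training set.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict_proba(weights, bias, x) -> float:
    """Probability of the positive class under logistic regression."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


def fgsm_perturb(weights, bias, x, y, epsilon):
    """Shift each feature by +/- epsilon in the direction that increases the loss.

    For logistic regression with cross-entropy loss, the gradient of the loss
    with respect to the input is (p - y) * w.
    """
    p = predict_proba(weights, bias, x)
    grad = [(p - y) * w for w in weights]
    return [xi + epsilon * ((g > 0) - (g < 0)) for xi, g in zip(x, grad)]


# Hypothetical model and input; the true label is 1.
weights, bias = [2.0, -1.0], 0.0
x, y = [0.5, 0.2], 1
x_adv = fgsm_perturb(weights, bias, x, y, epsilon=0.5)

p_clean = predict_proba(weights, bias, x)    # above 0.5: classified as positive
p_adv = predict_proba(weights, bias, x_adv)  # below 0.5: the prediction flips
```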

Existential Risk from Advanced AI:

- Develop AI alignment research

- Implement value learning mechanisms

- Establish international cooperation

- Create safety research institutions

Precautionary principles should guide AGI development. International coordination and robust safety measures are essential before deploying advanced systems.

Safety Benefits

AI enables earlier disease detection, personalized treatments, and drug discovery, potentially saving millions of lives. In some studies, medical imaging AI has detected cancers and other conditions as accurately as, or more accurately than, specialist clinicians on specific tasks.

Autonomous vehicles and smart transportation systems could dramatically reduce accidents caused by human error. AI-powered safety systems in factories and homes can prevent injuries and save lives.

AI accelerates scientific research, helping solve complex problems in climate science, medicine, and physics. AI can analyze vast datasets that would be impossible for humans to process manually.

AI-powered assistive technologies help people with disabilities live more independently. Speech recognition, image description, and navigation assistance improve quality of life.

Expert Perspectives

"The development of full artificial intelligence could spell the end of the human race. It would take off on its own, and re-design itself at an ever increasing rate. Humans, who are limited by slow biological evolution, couldn't compete, and would be superseded." - Stephen Hawking

"AI safety is not about preventing progress—it's about ensuring that progress continues safely." - Stuart Russell

"AI will change the world more than anything in the history of mankind. More than electricity." - Kai-Fu Lee

"The real question is not whether machines think but whether men do. The mystery which surrounds a thinking machine already surrounds a thinking man." - B.F. Skinner

"The rise of powerful AI will be either the best, or the worst thing, ever to happen to humanity. We do not yet know which." - Stephen Hawking

"AI will do for human capability what the steam engine did for human muscle." - Erik Brynjolfsson

AI Safety Knowledge Quiz

Which of the following represents the highest category of AI safety risk?

While all listed risks are significant, existential risk from Artificial General Intelligence (AGI) represents the highest category of risk because it could potentially threaten human civilization itself. Privacy violations, job displacement, and bias, while serious, do not pose existential threats to humanity.

The answer is B) Existential risk from AGI.

AI safety risks exist on a spectrum from minor inconveniences to existential threats. Understanding this hierarchy helps prioritize safety efforts. While near-term risks like privacy and bias require immediate attention, long-term existential risks require foundational research and preparation.

Existential Risk: Threat to human civilization or species survival

AGI: Artificial General Intelligence with human-level capabilities

Risk Hierarchy: Classification of risks by severity and impact

• Prioritize by risk severity

• Address both short and long term

• Balance benefits with risks

• Consider risk magnitude

• Evaluate probability

• Assess controllability

• Ignoring low-probability high-impact risks

• Overemphasizing immediate risks

• Not considering systemic effects
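The three factors above (magnitude, probability, controllability) can be combined into a rough priority score. The formula, the scales, and every number below are assumptions invented for illustration, not an established standard; the point is only that a low-probability, high-impact risk can still rank first.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    name: str
    magnitude: float        # harm if it occurs, on a hypothetical 1-100 scale
    probability: float      # rough chance of occurring, 0.0 to 1.0
    controllability: float  # 0.0 = cannot be contained, 1.0 = fully containable

    def priority(self) -> float:
        """Expected harm, discounted by how well the risk can be contained."""
        return self.magnitude * self.probability * (1.0 - self.controllability)


def rank(risks):
    """Order risks from highest to lowest priority score."""
    return sorted(risks, key=lambda r: r.priority(), reverse=True)


# Illustrative values only.
risks = [
    Risk("privacy breach", magnitude=4, probability=0.6, controllability=0.7),
    Risk("biased decisions", magnitude=5, probability=0.7, controllability=0.6),
    Risk("loss of control over an advanced system", magnitude=100,
         probability=0.02, controllability=0.1),
]
ranked = rank(risks)
```

With these made-up inputs the loss-of-control risk ranks first despite its 2% probability, because its magnitude is large and it is hard to contain.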

Explain the concept of AI alignment and why it's crucial for AI safety.

AI Alignment: The challenge of ensuring AI systems pursue goals that are beneficial to humanity and aligned with human values. This becomes critical as AI systems become more capable and autonomous.

Why Crucial: Misaligned AI could pursue goals that seem beneficial but lead to unintended consequences harmful to humans. In Nick Bostrom's well-known thought experiment, for example, an AI optimizing solely for paperclip production might convert all available matter into paperclips.

Alignment Approaches: Value learning, inverse reinforcement learning, cooperative inverse reinforcement learning, and constitutional AI are among the proposed solutions.

Long-term Importance: As AI systems become more powerful, ensuring they remain aligned with human values becomes increasingly critical for safety.

AI alignment addresses the fundamental question of how to ensure AI systems do what we want them to do, not just what we tell them to do. This is particularly important for advanced AI systems that may find unexpected ways to achieve their objectives that conflict with human intentions.

AI Alignment: Ensuring AI goals match human values

Value Learning: Teaching AI systems human values

Instrumental Goals: Means to achieve ultimate goals

• Intentions matter more than instructions

• Side effects can be dangerous

• Values must be explicit

• Think about unintended consequences

• Consider multiple stakeholder values

• Plan for capability growth

• Assuming AI understands context

• Not considering side effects

• Overlooking value complexity

A city plans to deploy AI-powered predictive policing software to identify crime hotspots. The system analyzes historical crime data, demographics, and social media activity. Assess the safety risks and propose mitigation strategies for this deployment.

Identified Risks:

1. Bias Amplification: Historical crime data may reflect past policing biases

2. Privacy Violations: Monitoring social media and demographic data

3. Discriminatory Policing: Over-policing of certain communities

4. False Positives: Incorrect predictions leading to unwarranted interventions

Mitigation Strategies:

• Audit training data for historical biases

• Implement fairness constraints in algorithms

• Ensure transparent decision-making process

• Establish community oversight committees

• Limit data collection to relevant factors

• Regular monitoring and bias testing

Recommendation: Proceed with extensive safeguards and community involvement.
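The "regular monitoring and bias testing" step could include comparing false positive rates across districts or communities, since false positives here mean unwarranted interventions. The sketch below uses made-up labels and district names; note that in practice the "ground truth" itself comes from recorded crime data, which may carry the same historical bias.

```python
def false_positive_rate(y_true, y_pred) -> float:
    """Share of actual negatives (0) that were predicted positive (1)."""
    negatives = sum(1 for t in y_true if t == 0)
    false_pos = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    return false_pos / negatives if negatives else 0.0


def fpr_by_group(y_true, y_pred, groups):
    """False positive rate computed separately for each group."""
    result = {}
    for g in sorted(set(groups)):
        idx = [i for i, label in enumerate(groups) if label == g]
        result[g] = false_positive_rate(
            [y_true[i] for i in idx], [y_pred[i] for i in idx]
        )
    return result


# Hypothetical audit data: 1 = flagged as hotspot (y_pred) / incident recorded (y_true).
y_true = [0, 0, 0, 1, 0, 0, 0, 1]
y_pred = [1, 1, 0, 1, 0, 0, 1, 1]
districts = ["north"] * 4 + ["south"] * 4
rates = fpr_by_group(y_true, y_pred, districts)  # north: 2/3, south: 1/3
```

A persistent gap like the one in this toy data would be a reason to revisit the training data and features before continuing deployment.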

This example demonstrates how AI applications in sensitive domains require careful risk assessment. The key is identifying potential negative consequences before deployment and implementing comprehensive safeguards. Community involvement and transparency are essential for public trust.

Predictive Policing: Using data to forecast crime locations

Algorithmic Bias: Systematic unfairness in AI decisions

Community Oversight: Public involvement in AI governance

• Assess bias in historical data

• Protect privacy rights

• Ensure transparency

• Involve affected communities

• Conduct impact assessments

• Plan for appeals process

• Ignoring historical bias

• Not involving stakeholders

• Lack of transparency

You're leading an AI safety team for a healthcare AI system that diagnoses diseases. The system has shown 95% accuracy in testing but occasionally makes critical errors. Design a safety framework to minimize patient harm while maintaining system utility.

Safety Framework:

1. Human-in-the-Loop: Require physician verification for all critical decisions

2. Confidence Thresholds: Flag low-confidence predictions for review

3. Uncertainty Quantification: Provide probability estimates for all predictions

4. Fail-Safe Protocols: Default to "consult physician" when uncertain

5. Continuous Monitoring: Track system performance in real-time

6. Explainability: Provide reasoning for all diagnoses

7. Redundancy: Use ensemble methods to cross-validate results

Implementation: Start with assistance mode, gradually increase autonomy based on performance metrics and safety record.
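Several parts of this framework (confidence thresholds, ensemble cross-checking, the fail-safe default, and physician verification for critical decisions) can be sketched as a single triage function. The thresholds, field names, and the `triage` function itself are hypothetical; real values would need clinical validation.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Decision:
    label: str
    confidence: float
    needs_physician_review: bool
    reason: str


def triage(ensemble_probs, threshold=0.9, max_disagreement=0.15, critical=True):
    """Turn per-model disease probabilities into a decision with fail-safe defaults."""
    if not ensemble_probs:
        return Decision("consult physician", 0.0, True, "no model output")
    p = mean(ensemble_probs)
    spread = max(ensemble_probs) - min(ensemble_probs)
    confidence = max(p, 1.0 - p)
    # Fail-safe: any sign of uncertainty defaults to "consult physician".
    if spread > max_disagreement:
        return Decision("consult physician", confidence, True, "ensemble members disagree")
    if confidence < threshold:
        return Decision("consult physician", confidence, True, "confidence below threshold")
    label = "positive" if p >= 0.5 else "negative"
    # Critical decisions still go to a physician even when the model is confident.
    return Decision(label, confidence, critical, "confident, consistent prediction")


confident = triage([0.97, 0.95, 0.96])
split = triage([0.90, 0.60, 0.95])
unsure = triage([0.70, 0.72, 0.71])
```

Here `confident` keeps its label but is still routed for verification because `critical` defaults to true, while `split` and `unsure` both fall back to "consult physician".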

Healthcare AI requires the highest safety standards due to life-threatening consequences of errors. The key is implementing multiple layers of safety, from technical safeguards to human oversight. Gradual deployment allows for safety validation before full implementation.

Human-in-the-Loop: Human oversight of AI decisions

Confidence Threshold: Minimum certainty required for decisions

Explainability: Ability to understand AI reasoning

• Life-critical systems need human oversight

• Uncertainty must be quantified

• Fail-safe defaults required

• Start with assistance mode

• Implement gradual autonomy

• Continuous performance monitoring

• Removing human oversight

• Not quantifying uncertainty

• Inadequate fail-safe measures

Which approach is most effective for ensuring AI safety at a societal level?

Multi-stakeholder governance with oversight provides the best balance of innovation, safety, and democratic accountability. This approach includes government regulation, industry self-regulation, academic research, civil society input, and international cooperation.

Complete bans stifle beneficial development, self-regulation lacks accountability, and military control raises ethical concerns. A collaborative approach harnesses diverse expertise while ensuring public interest protection.

The answer is C) Multi-stakeholder governance with oversight.

AI safety governance requires balancing innovation with protection. No single entity has all the necessary expertise and perspectives. Multi-stakeholder approaches combine technical knowledge, regulatory oversight, ethical considerations, and public input for comprehensive safety frameworks.

Multi-stakeholder: Involving diverse groups with interests

Democratic Accountability: Responsibility to public interest

Regulatory Oversight: Government supervision of compliance

• Balance innovation with safety

• Include diverse perspectives

• Maintain democratic accountability

• Engage all stakeholders

• Create adaptive frameworks

• Foster international cooperation

• Excluding key stakeholders

• Rigid, inflexible frameworks

• Not considering global implications

FAQ

Q: Should I be worried about AI taking over the world?

A: Current AI systems are far from achieving human-level general intelligence and pose no immediate threat of "taking over the world." However, advanced AI development does raise legitimate long-term safety concerns that researchers are actively studying. The focus should be on ensuring AI development proceeds safely and beneficially. Near-term concerns like bias, privacy, and job displacement are more immediate than existential risks.

Q: How can I build safer AI systems?

A: Focus on: 1) Robust testing and validation, 2) Bias detection and mitigation, 3) Privacy preservation techniques, 4) Explainability and interpretability, 5) Security against adversarial attacks, 6) Human oversight mechanisms, 7) Ethical design principles. Start with safety considerations from the beginning of your project, not as an afterthought. Regular audits and continuous monitoring are essential for maintaining safety over time.