What is AI and How Does it Work?

Complete AI guide • Step-by-step explanations

AI Fundamentals:

Artificial Intelligence (AI) is the simulation of human intelligence processes by machines, especially computer systems. These processes include learning (the acquisition of information and rules for using the information), reasoning (using rules to reach approximate or definite conclusions), and self-correction.

At its core, AI works by combining large amounts of data with fast, iterative processing and intelligent algorithms, allowing the software to learn automatically from patterns or features in the data.

Key AI concepts:

- Machine Learning: Algorithms that improve through experience

- Neural Networks: Systems modeled after the human brain

- Data Processing: Pattern recognition and decision-making

- Automation: Performing tasks typically done by humans

Modern AI systems use neural networks with multiple layers (deep learning) to recognize patterns, make predictions, and generate responses. They process vast amounts of data to identify complex relationships that would be impractical for humans to detect manually.

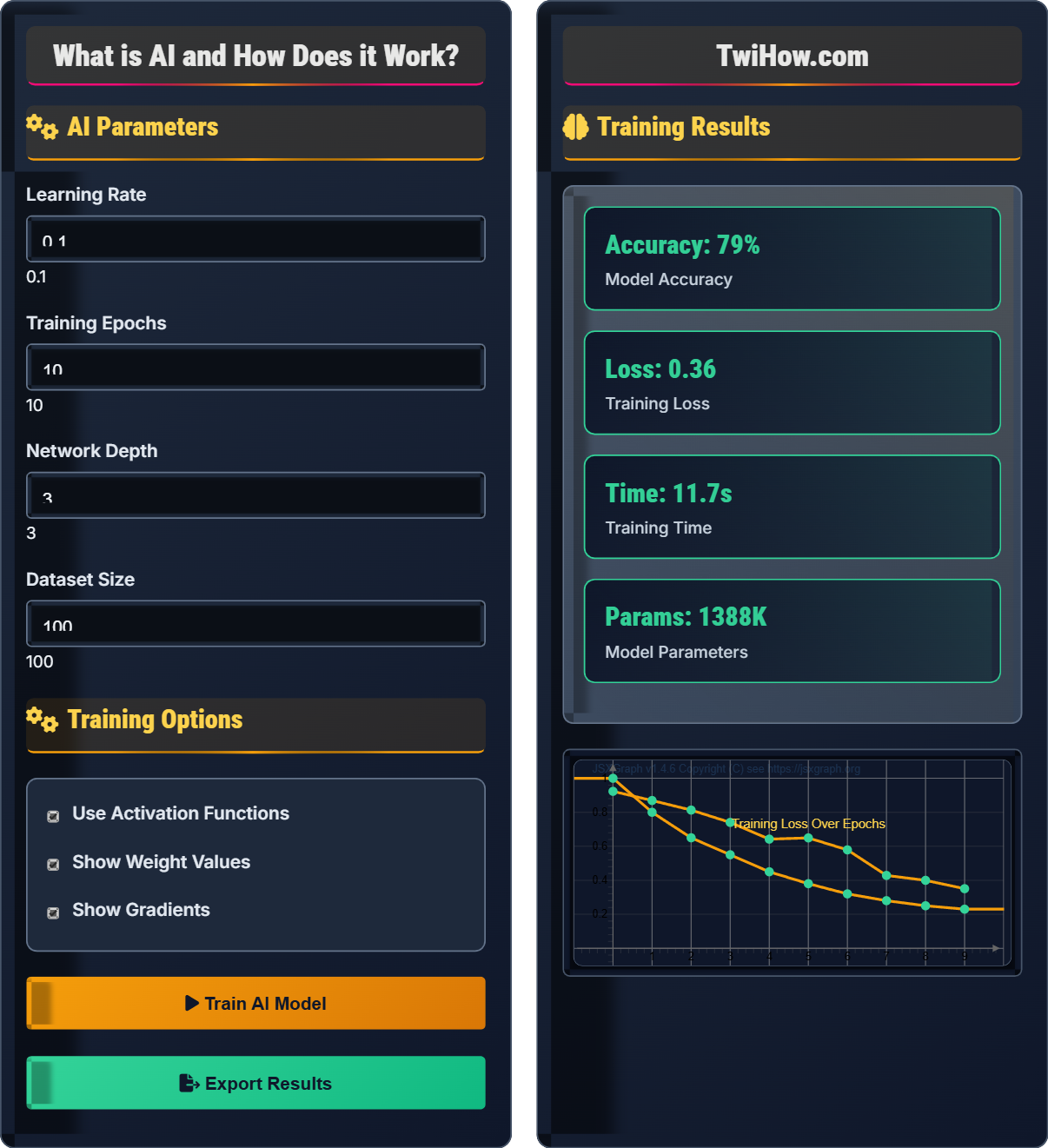

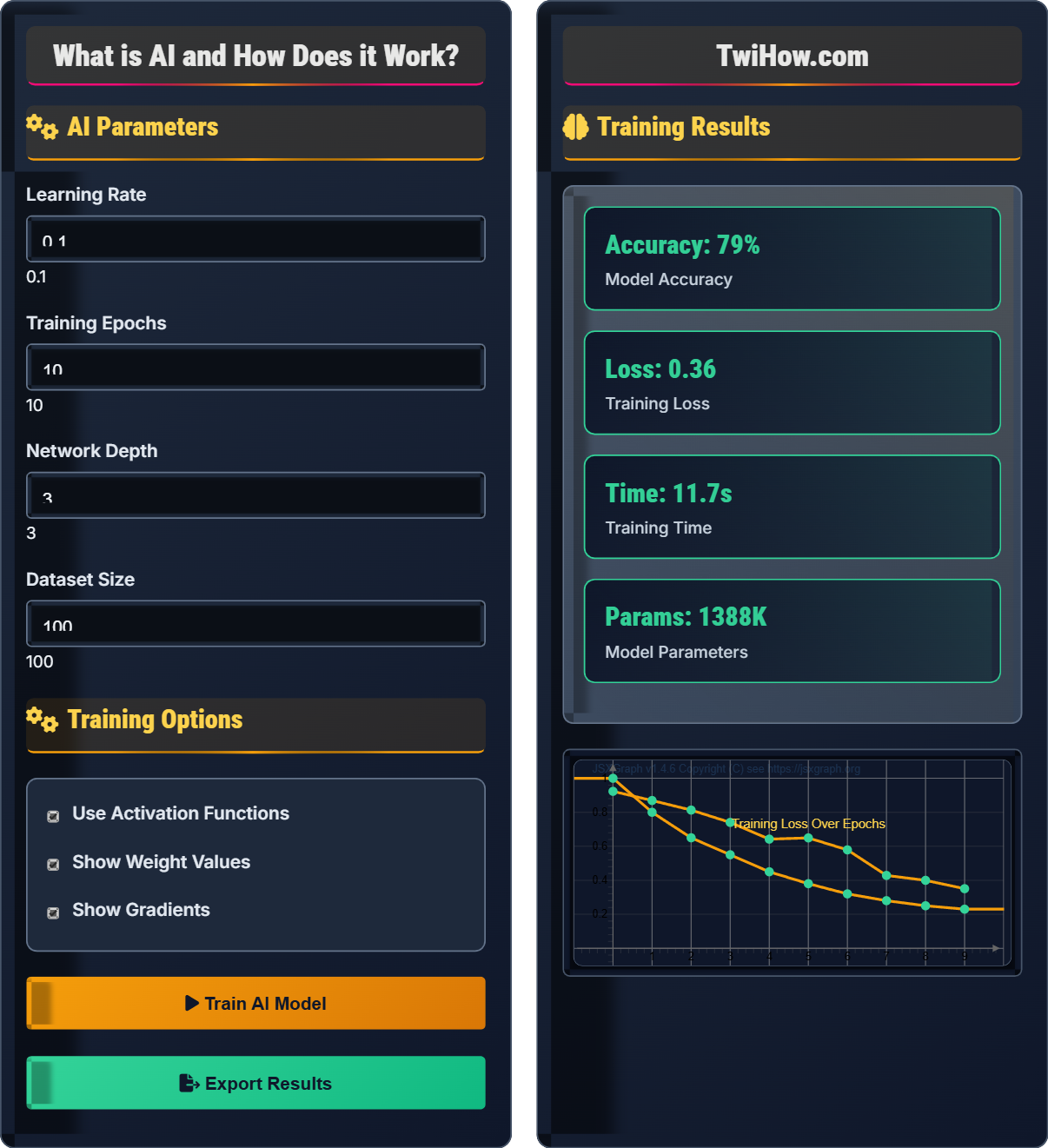

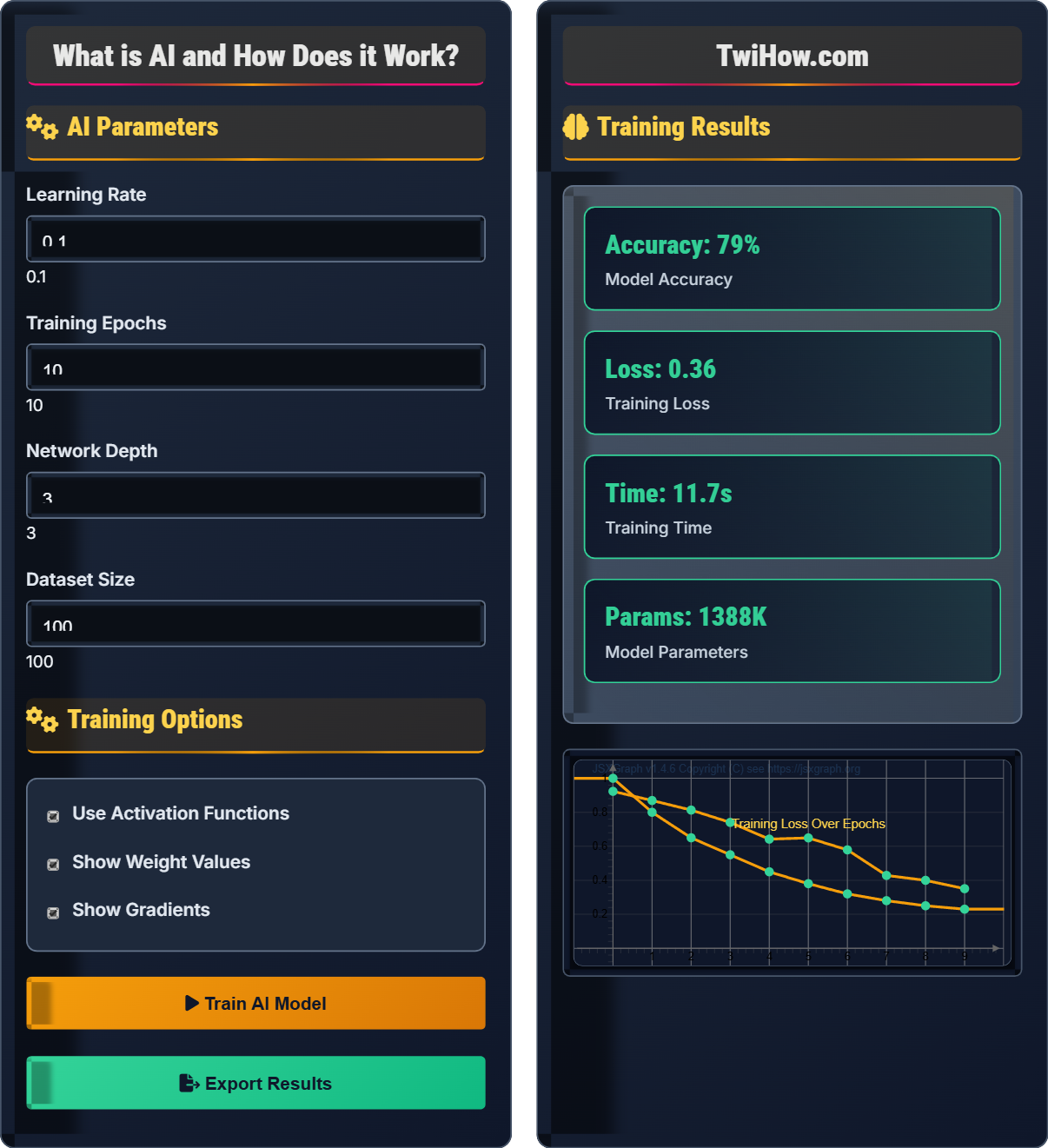

Training Results

| Epoch | Accuracy | Loss | Parameters |
|---|---|---|---|
| 1 | 23% | 0.89 | 1.2K |
| 2 | 45% | 0.72 | 1.2K |
| 3 | 67% | 0.54 | 1.2K |
| 4 | 78% | 0.38 | 1.2K |
| 5 | 85% | 0.23 | 1.2K |

How AI Works Explained

AI systems perform tasks that typically require human intelligence, such as visual perception, speech recognition, decision-making, and language translation.

Neural networks are computing systems inspired by the human brain. They consist of interconnected nodes (neurons) organized in layers, with parameters that are learned during training:

- Input Layer: Receives raw data

- Hidden Layers: Process information through weighted connections

- Output Layer: Produces final results

- Weights & Biases: Parameters adjusted during training
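
The layer structure above can be sketched in a few lines of Python. This is a minimal illustration, not a production implementation: the network size (2 inputs, 2 hidden neurons, 1 output), the sigmoid activation, and all weight and bias values are assumptions chosen only to make the example runnable.

```python
import math

def sigmoid(z: float) -> float:
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def dense_layer(inputs, weights, biases):
    """One layer: each neuron takes a weighted sum of all inputs plus its bias."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]

# Hypothetical parameters; in a real network these are learned during training.
hidden_weights = [[0.5, -0.4], [0.3, 0.8]]  # 2 hidden neurons, 2 inputs each
hidden_biases = [0.0, -0.1]
output_weights = [[1.2, -0.7]]              # 1 output neuron, 2 hidden inputs
output_biases = [0.05]

def forward(inputs):
    """Input layer -> hidden layer -> output layer."""
    hidden = dense_layer(inputs, hidden_weights, hidden_biases)
    return dense_layer(hidden, output_weights, output_biases)

prediction = forward([1.0, 2.0])
print(prediction)  # a single value between 0 and 1
```

Representing each layer as a list of per-neuron weight lists keeps the example dependency-free; numerical libraries typically express the same computation as a matrix multiplication.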

Key areas where AI is transforming industries:

- Healthcare: Medical diagnosis, drug discovery, personalized treatment

- Finance: Fraud detection, algorithmic trading, risk assessment

- Transportation: Autonomous vehicles, traffic optimization

- Retail: Recommendation engines, inventory management

- Education: Personalized learning, automated grading

- Security: Facial recognition, threat detection

Main types and subfields of AI:

- Narrow AI: Specialized for specific tasks (virtual assistants, image recognition)

- General AI: Hypothetical AI with human-like cognitive abilities

- Machine Learning: Algorithms that improve through experience

- Deep Learning: Neural networks with multiple layers

- Natural Language Processing: Understanding and generating human language

AI Fundamentals

Neural networks, machine learning, deep learning, natural language processing.

Output = ActivationFunction(Σ(Weight × Input) + Bias)

Where Output = neuron response, Weight = connection strength, Input = signal value, and Bias = a learned offset added to the weighted sum.
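
In conventional notation, the same formula for a neuron with n inputs might be written as follows. The symbols y, f, w_i, x_i, and b are notation chosen here for illustration, and the numbers in the worked example are assumed values rather than anything from a real model.

```latex
y = f\left(\sum_{i=1}^{n} w_i x_i + b\right)

% Worked example with assumed values x = (1, 2), w = (0.5, -0.25), b = 0.1
% and the ReLU activation f(z) = \max(0, z):
z = 0.5 \cdot 1 + (-0.25) \cdot 2 + 0.1 = 0.1, \qquad y = \max(0, z) = 0.1
```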

- Data quality affects model performance

- More layers enable complex pattern recognition

- Supervised training requires labeled examples

Applications

Image recognition, natural language processing, autonomous vehicles, recommendation systems.

- Healthcare diagnosis

- Financial fraud detection

- Customer service chatbots

- Content personalization

Key challenges and considerations:

- Ethics and bias mitigation

- Privacy and security

- Regulatory compliance

- Human-AI collaboration

AI Learning Quiz

Which of the following is NOT a typical component of a neural network?

Neural networks consist of neurons (nodes), weights (connections between nodes), and activation functions (that determine output). Quantum gates are components of quantum computers, not classical neural networks.

The answer is D) Quantum Gates.

Understanding the basic components of neural networks is fundamental to grasping how AI learns. Each neuron receives inputs, applies weights to them, sums them up, adds a bias, and passes the result through an activation function to produce an output. This process is repeated across multiple layers to extract increasingly abstract features from the input data.

Neuron: Basic unit of a neural network that processes information

Weight: Parameter that determines the strength of connection between neurons

Activation Function: Mathematical function that determines output of a neuron
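
The role of the activation function can be checked numerically. The sketch below, using assumed parameter values and one-neuron layers for simplicity, shows that two stacked layers without an activation collapse into a single linear function, while inserting a ReLU between them produces a response that no single linear layer can reproduce.

```python
def linear(x, w, b):
    """A one-input neuron without an activation function."""
    return w * x + b

def relu(z):
    """ReLU activation: passes positive values, clips negatives to zero."""
    return max(0.0, z)

# Hypothetical parameters for two one-neuron layers.
w1, b1 = 2.0, -1.0
w2, b2 = -3.0, 0.5

def stacked_linear(x):
    """Two layers with no activation in between."""
    return linear(linear(x, w1, b1), w2, b2)

def collapsed(x):
    """The single linear layer that the stack above is equivalent to."""
    return linear(x, w2 * w1, w2 * b1 + b2)

def stacked_relu(x):
    """The same two layers with a ReLU between them."""
    return linear(relu(linear(x, w1, b1)), w2, b2)

xs = [-2.0, -1.0, 0.0, 1.0, 2.0]
print(all(abs(stacked_linear(x) - collapsed(x)) < 1e-9 for x in xs))  # True
print([stacked_relu(x) for x in xs])  # [0.5, 0.5, 0.5, -2.5, -8.5]
```

The ReLU version is flat for the first three inputs and then bends downward, which is the kind of non-linear shape that lets deeper networks model more complex patterns.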

Key points:

• Neurons are connected by weighted links

• Weights are adjusted during training

• Activation functions introduce non-linearity

Tips:

• Think of neurons as decision-making units

• Weights represent learned knowledge

• Activation functions allow complex pattern recognition

Common mistakes:

• Confusing neural networks with quantum computing

• Thinking artificial neurons are biological cells

• Ignoring the importance of activation functions

Explain the difference between training and inference phases in machine learning. Why is this distinction important?

Training Phase: The model learns from labeled data by adjusting its internal parameters to minimize prediction errors. During this phase, the algorithm uses known inputs and outputs to "learn" patterns.

Inference Phase: The trained model makes predictions on new, unseen data using the parameters learned during training. No further learning occurs.

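This distinction is important because a model must be evaluated on data it did not see during training. If training and testing data are mixed, the evaluation looks better than it should, and overfitting, where the model performs well on known data but poorly on new data, goes undetected.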

The training-inference cycle is fundamental to machine learning. During training, the system learns from examples, similar to how humans learn from practice. During inference, the system applies its learned knowledge to new situations. Keeping evaluation data separate from training data shows whether a model generalizes to new data rather than just memorizing training examples.
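
A small experiment can make the memorization problem concrete. The sketch below is an illustration under stated assumptions: the toy dataset, the 20% label noise, the 150/50 split, and the choice of a 1-nearest-neighbour "memorizer" are all hypothetical and were picked only because such a model reproduces its training data perfectly.

```python
import random

random.seed(0)  # fixed seed so the run is repeatable

def make_point():
    """Toy data: the label is 1 when x > 0.5, with 20% of labels flipped as noise."""
    x = random.random()
    label = int(x > 0.5)
    if random.random() < 0.2:
        label = 1 - label
    return x, label

data = [make_point() for _ in range(200)]
train, test = data[:150], data[150:]  # the test set is never used for fitting

def predict_memorizer(x):
    """1-nearest-neighbour: return the label of the closest training point."""
    nearest = min(train, key=lambda point: abs(point[0] - x))
    return nearest[1]

def accuracy(predict, dataset):
    """Fraction of points in the dataset that are predicted correctly."""
    return sum(predict(x) == y for x, y in dataset) / len(dataset)

train_acc = accuracy(predict_memorizer, train)
test_acc = accuracy(predict_memorizer, test)
print(f"train accuracy: {train_acc:.2f}, test accuracy: {test_acc:.2f}")
```

Because every training point is its own nearest neighbour, training accuracy is perfect even though some labels are noise. Accuracy on the held-out test set is typically noticeably lower, and that gap is the signal of overfitting that would stay hidden if the model were scored only on its training data.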

Training: Learning phase where model parameters are adjusted

Inference: Prediction phase using learned parameters

Overfitting: Model performs well on training data but poorly on new data

Key points:

• Never train and test on the same data

• Separate training and testing datasets

• Monitor for overfitting during training

Tips:

• Use validation sets to monitor training progress

• Apply regularization to prevent overfitting

• Cross-validation improves reliability

Common mistakes:

• Testing on training data

• Not monitoring for overfitting

• Using too little training data

A medical imaging company wants to develop an AI system to detect early-stage lung cancer from CT scans. Describe the AI pipeline they would need to implement, including data requirements, model architecture considerations, and ethical implications.

Data Requirements: Thousands of labeled CT scans (with and without cancer), patient demographics, clinical notes. Data must be anonymized and diverse.

Model Architecture: Convolutional Neural Network (CNN) suitable for image analysis, possibly with attention mechanisms to highlight suspicious regions.

Ethical Implications: High accuracy critical due to life-or-death decisions, potential bias against demographic groups, explainability for doctors, regulatory approval needed.

Pipeline: Data preprocessing → Model training → Validation → Clinical trials → Deployment with continuous monitoring.

Medical AI applications represent some of the most challenging and important uses of AI. The high stakes require exceptional accuracy, robust validation, and careful consideration of ethical implications. Unlike other applications, medical AI must meet strict regulatory standards and maintain explainability for healthcare professionals who need to understand AI decisions.

Convolutional Neural Network (CNN): Deep learning model designed for image processing

Explainable AI: Systems that provide insight into their decision-making process

FDA Approval: Regulatory authorization required to market medical devices in the United States

Key points:

• Medical AI requires extensive validation

• Patient privacy must be maintained

• Models must be interpretable to doctors

Tips:

• Collect diverse datasets to avoid bias

• Implement uncertainty quantification

• Plan for continuous learning from new cases

Common mistakes:

• Insufficient validation for medical applications

• Ignoring demographic biases in training data

• Failing to consider legal liability issues

An AI hiring system shows preference for male candidates over female candidates despite gender-neutral job descriptions. Explain how this bias could occur and propose three technical solutions to mitigate it.

How Bias Occurs: Historical hiring data may contain gender bias, causing the AI to learn discriminatory patterns. The model might associate certain qualifications with gender even when not explicitly stated.

Technical Solutions:

1. Debiasing Training Data: Remove or adjust historical biases in training data

2. Fairness Constraints: Add mathematical constraints during training to enforce equal treatment

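3. Adversarial Debiasing: Train a secondary (adversary) network to predict the sensitive attribute from the model's internal representations, and train the main model so that the adversary fails, which pushes sensitive information out of those representations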

Regular audits and fairness metrics are essential to ensure continued unbiased performance.
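
One way such an audit might start is by comparing selection rates across groups. The sketch below is a minimal example under stated assumptions: the `decisions` list is made-up audit data, and the two metrics shown (the demographic parity gap and the disparate impact ratio) are common fairness measures but not the only ones.

```python
from collections import defaultdict

# Hypothetical audit log of (group, model_decision); 1 means "advance to interview".
decisions = [
    ("male", 1), ("male", 1), ("male", 0), ("male", 1), ("male", 0),
    ("female", 1), ("female", 0), ("female", 0), ("female", 0), ("female", 1),
]

def selection_rates(records):
    """Fraction of positive decisions for each group."""
    totals, selected = defaultdict(int), defaultdict(int)
    for group, decision in records:
        totals[group] += 1
        selected[group] += decision
    return {group: selected[group] / totals[group] for group in totals}

rates = selection_rates(decisions)

# Demographic parity gap: difference between the highest and lowest selection rate.
parity_gap = max(rates.values()) - min(rates.values())

# Disparate impact ratio: lowest selection rate divided by the highest.
impact_ratio = min(rates.values()) / max(rates.values())

print(rates)
print(f"parity gap: {parity_gap:.2f}, impact ratio: {impact_ratio:.2f}")
```

With this made-up data the selection rates are 0.6 and 0.4, giving a gap of 0.20 and a ratio of about 0.67. A ratio below 0.8 is often treated as a warning sign (the "four-fifths" rule of thumb), although a real audit would need far more data and context than this.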

AI bias is a critical issue that stems from biased training data reflecting historical discrimination. Since AI systems learn patterns from data, they can perpetuate and amplify existing societal biases. Addressing bias requires proactive measures during data collection, model training, and deployment phases. This challenge highlights the importance of diverse development teams and inclusive datasets.

Algorithmic Bias: Systematic discrimination by AI systems

Fairness Constraints: Mathematical conditions to ensure equitable outcomes

Adversarial Debiasing: Technique to remove sensitive attributes from AI representations

Key points:

• AI reflects biases in training data

• Regular bias audits are necessary

• Fairness must be considered from the start

Tips:

• Audit datasets for representation gaps

• Use fairness-aware algorithms

• Involve diverse stakeholders in development

Common mistakes:

• Assuming AI is inherently unbiased

• Not auditing for disparate impact

• Relying solely on accuracy metrics

Which of the following represents a fundamental limitation of current AI systems?

Current AI systems excel at processing large datasets, recognizing complex patterns, and operating efficiently. However, they lack common sense reasoning - the ability to apply general knowledge and intuitive understanding that humans possess. AI systems struggle with abstract reasoning, understanding context beyond training data, and transferring knowledge across domains.

The answer is B) Lack of common sense reasoning.

Despite remarkable achievements in narrow domains, current AI systems lack the general intelligence and common sense that humans naturally possess. They operate within the boundaries of their training data and struggle with situations requiring abstract reasoning or knowledge transfer. This limitation highlights the distinction between narrow AI (task-specific) and general AI (human-like intelligence), which remains theoretical.

Common Sense Reasoning: Ability to apply intuitive knowledge about the world

Narrow AI: AI specialized for specific tasks

General AI: Hypothetical AI with human-like cognitive abilities

Key points:

• Current AI lacks general intelligence

• Domain-specific performance varies

• Context understanding remains limited

Tips:

• Understand AI capabilities and limitations

• Design systems with human oversight

• Expect failures outside training domain

Common mistakes:

• Overestimating AI capabilities

• Assuming AI understands context

• Ignoring failure modes

FAQ

Q: How do AI systems actually "learn" from data?

A: AI systems "learn" through a process called training, where they adjust internal parameters to minimize errors in predictions. In neural networks, this involves:

1. Forward Pass: Input data flows through the network producing an output

2. Error Calculation: The difference between predicted and actual output is computed

3. Backpropagation: The error is propagated backward through the network

4. Parameter Update: Weights and biases are adjusted using optimization algorithms like gradient descent

This process repeats thousands of times, gradually improving the model's accuracy. The "learning" is essentially the systematic adjustment of parameters to reduce prediction errors.
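
The four steps can be sketched for the simplest possible case, a single neuron with one weight and one bias and no activation function. Everything specific here is an assumption for illustration: the training data (generated from y = 2x + 1), the learning rate, the number of epochs, and the use of mean squared error as the loss.

```python
# Hypothetical training data that follows y = 2x + 1.
samples = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]

weight, bias = 0.0, 0.0  # parameters start untrained
learning_rate = 0.05     # assumed step size for gradient descent

def mean_squared_error(w, b):
    """Average squared difference between predictions and targets."""
    return sum((w * x + b - y) ** 2 for x, y in samples) / len(samples)

initial_loss = mean_squared_error(weight, bias)

for epoch in range(2000):
    grad_w = grad_b = 0.0
    for x, target in samples:
        prediction = weight * x + bias          # 1. forward pass
        error = prediction - target             # 2. error calculation
        grad_w += 2 * error * x / len(samples)  # 3. gradient of the loss with respect
        grad_b += 2 * error / len(samples)      #    to each parameter (backpropagation)
    weight -= learning_rate * grad_w            # 4. parameter update
    bias -= learning_rate * grad_b

final_loss = mean_squared_error(weight, bias)
print(f"weight={weight:.3f}, bias={bias:.3f}")
print(f"loss: {initial_loss:.3f} -> {final_loss:.6f}")
```

After training, the weight and bias end up close to 2 and 1, the values used to generate the data. In a multi-layer network the gradient step applies the chain rule layer by layer, but the overall loop has the same shape.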

Q: What's the difference between AI, machine learning, and deep learning?

A: These terms represent different levels of specificity in artificial intelligence:

AI (Artificial Intelligence): The broadest concept, covering any system that exhibits human-like intelligent behavior. This includes everything from simple rule-based systems to complex neural networks.

ML (Machine Learning): A subset of AI that focuses on systems that can learn and improve from experience without being explicitly programmed. ML algorithms build models from sample data.

DL (Deep Learning): A specialized subset of ML using neural networks with multiple layers (hence "deep"). DL excels at automatically discovering relevant features from raw data.

Think of it as nested circles: Deep Learning ⊂ Machine Learning ⊂ Artificial Intelligence.