What is the Future of AI?

Complete analysis • Predictions • Timelines • Scenarios

AI Evolution Timeline:

The future of AI involves multiple evolutionary phases: continued advancement in large language models, emergence of artificial general intelligence (AGI), integration into everyday life, and potential transformative impacts on society and economy.

Key development phases:

- 2024-2027: Enhanced language models, specialized AI systems

- 2027-2030: Early AGI capabilities, widespread automation

- 2030-2035: AGI emergence, human-AI collaboration

- 2035-2040: Post-AGI society, transformative changes

Success will depend on responsible development, ethical frameworks, and beneficial alignment with human values.

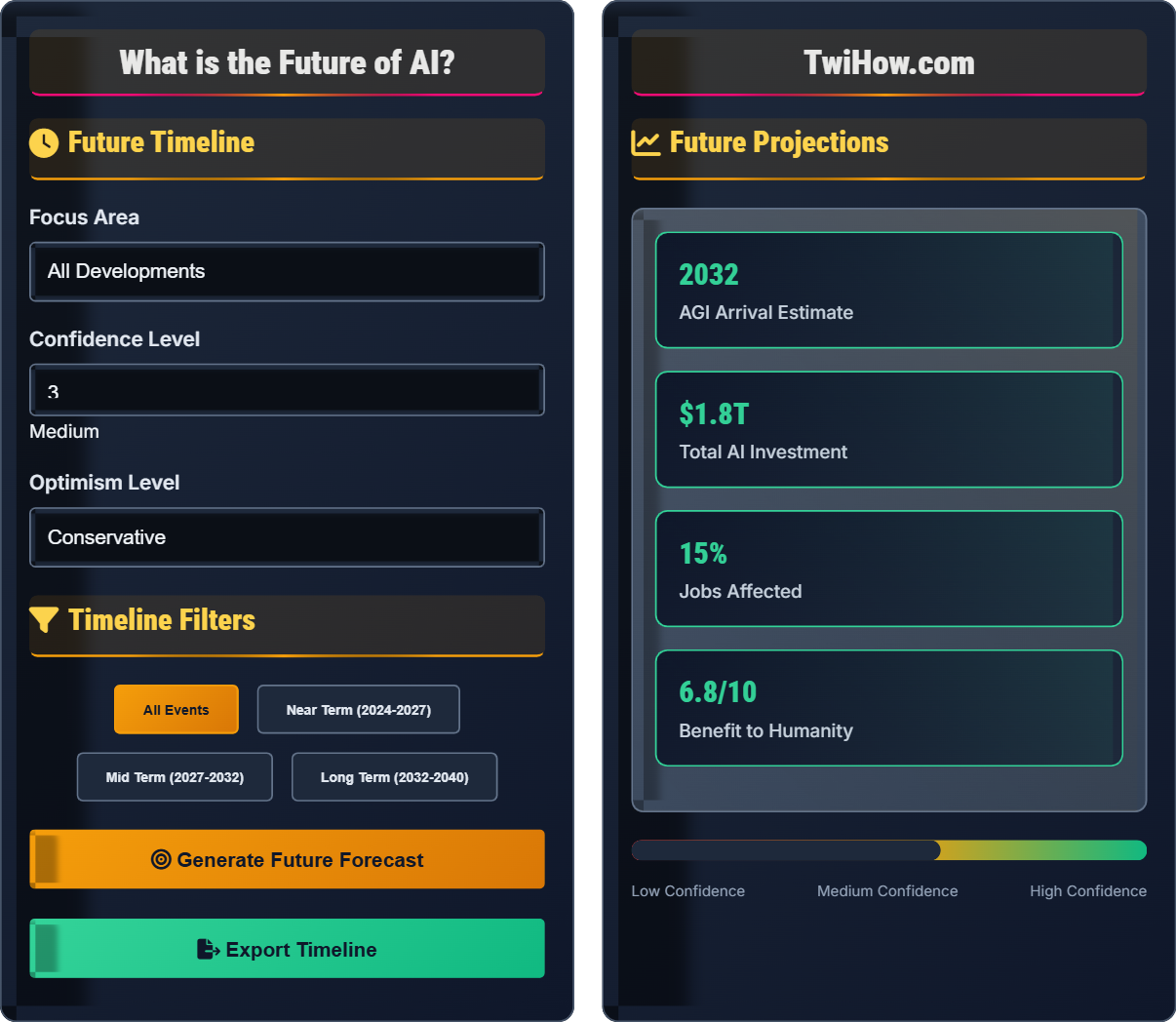

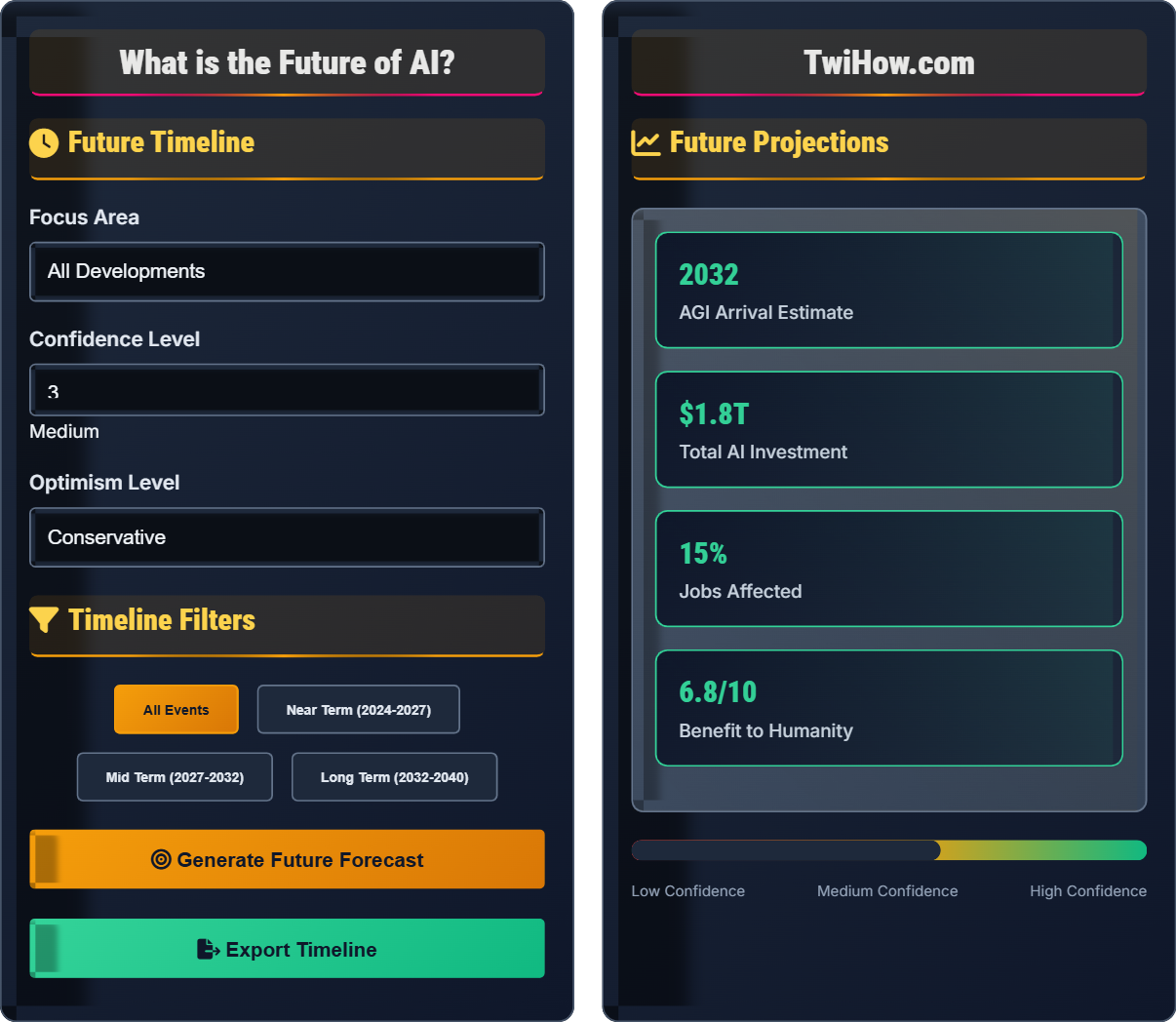

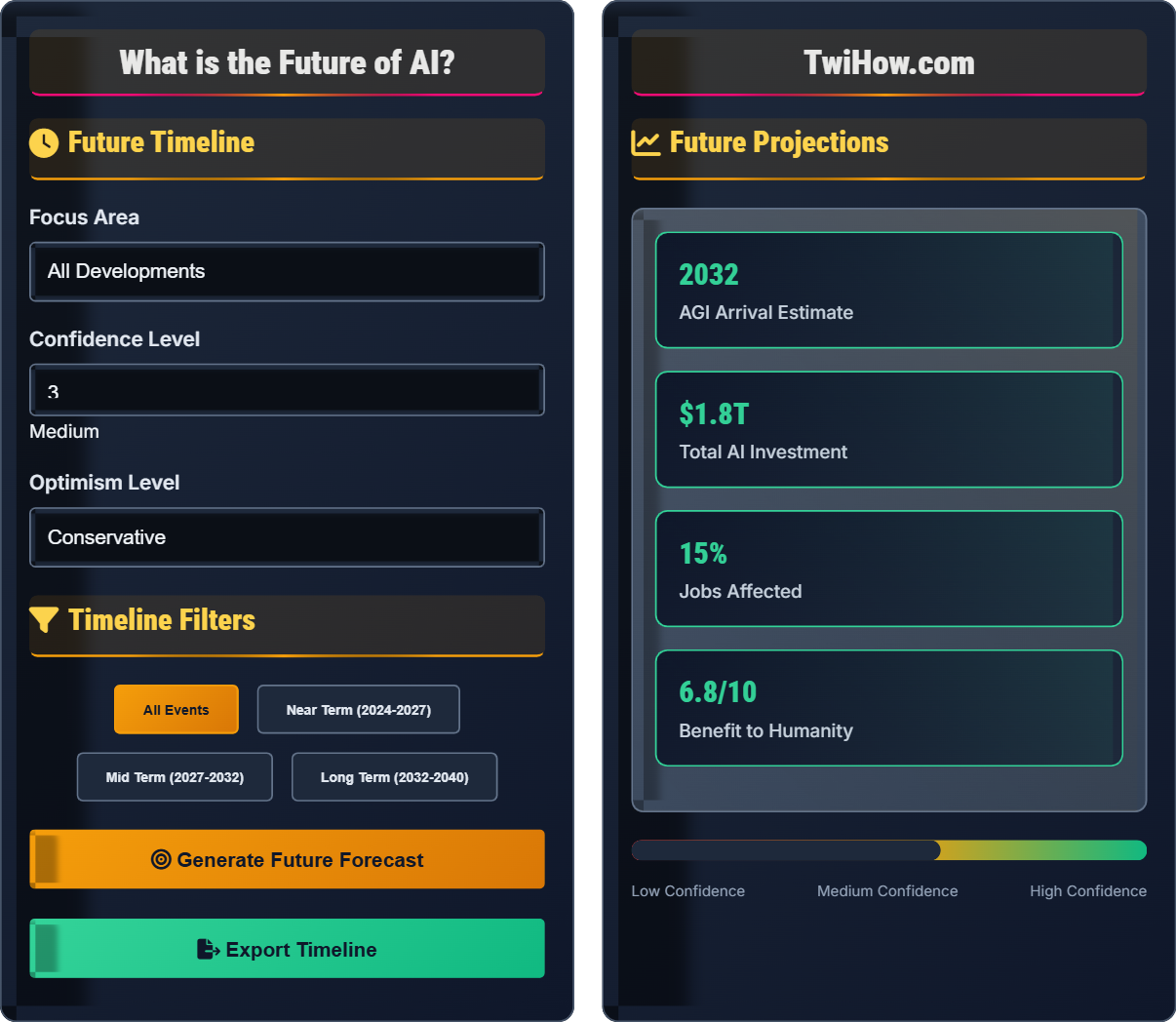

Future Timeline

AI Evolution Timeline

Developments: Large language models reach human-level performance in many domains, multimodal AI becomes mainstream, and specialized AI systems excel in specific tasks.

Impacts: Significant automation in knowledge work, enhanced human productivity, and new forms of human-AI collaboration.

Challenges: Misinformation, job displacement, and ethical concerns around AI-generated content.

Developments: Emergence of artificial general intelligence systems that can perform most human cognitive tasks at or above human level.

Impacts: Rapid acceleration of technological progress, transformation of economic structures, and fundamental changes in how society operates.

Challenges: Ensuring beneficial alignment, managing economic disruption, and maintaining human agency in decision-making.

Developments: Seamless integration of AI systems with human cognition and decision-making processes, emergence of hybrid intelligence.

Impacts: Enhanced human capabilities, solving complex global challenges, and new forms of creativity and innovation.

Challenges: Maintaining human identity and purpose, managing dependency on AI systems, and ensuring equitable access.

Developments: Potential emergence of superintelligent AI systems that surpass human intelligence in all domains.

Impacts: Fundamental transformation of civilization, potential solutions to existential risks, and unprecedented opportunities or challenges.

Challenges: Ensuring beneficial outcomes, managing superintelligence alignment, and preserving human values and agency.

Technology Roadmap

Neuromorphic Computing: AI hardware that mimics the human brain, enabling more efficient and powerful AI systems.

Quantum AI: Integration of quantum computing with AI to solve previously intractable problems.

Brain-Computer Interfaces: Direct neural connections enabling seamless human-AI interaction.

Self-Improving Systems: AI that can recursively improve its own capabilities and architecture.

Embodied AI: Physical AI systems with human-like sensory and motor capabilities.

Expert Predictions

Prediction: AGI by 2029, followed by technological singularity by 2045.

Rationale: Exponential growth in computing power and AI capabilities following historical trends.

Confidence: High, based on consistent technological progress patterns.

Prediction: AGI possible but alignment challenges must be solved first.

Rationale: Technical feasibility is high, but ensuring beneficial outcomes is critical.

Confidence: Medium, contingent on safety research progress.

Prediction: Focus on narrow AI applications rather than AGI.

Rationale: Practical applications will drive most value in the near term.

Confidence: High, based on current market trends.

Future Scenarios

Description: AI systems are developed with strong safety measures and aligned with human values, enhancing human capabilities and solving global challenges.

Conditions: Adequate safety research, international cooperation, and ethical development practices.

Outcomes: Improved quality of life, scientific breakthroughs, and sustainable development.

Description: Rapid AI development leads to significant economic and social disruption with uneven benefits.

Conditions: Accelerated development without adequate preparation or safety measures.

Outcomes: Job displacement, inequality, and potential social unrest requiring major adaptations.

Description: Superintelligent AI emerges with capabilities far exceeding human intelligence.

Conditions: Breakthrough in AGI leading to recursive self-improvement.

Outcomes: Either utopian transformation or existential risk depending on alignment success.

Future Forecast

- Alignment failure in advanced AI systems

- Concentration of AI capabilities and power

- Insufficient safety research and testing

- Geopolitical competition and arms races

- Loss of human agency and meaningful work

AI Future Knowledge Quiz

According to most AI experts, when is artificial general intelligence (AGI) most likely to arrive?

Many recent forecasts place the arrival of AGI between 2025 and 2030, with a number of predictions clustering around 2029-2030. These forecasts draw on expert predictions and the accelerating pace of AI development, though there is significant uncertainty and disagreement among experts, and some surveys of AI researchers yield considerably later median dates.

The answer is B) 2025-2030.

Expert predictions about AGI timelines are based on analysis of current technological trends, computational requirements, and historical precedent for technological development. However, AGI represents a qualitative leap that may not follow historical patterns, introducing significant uncertainty into predictions.

AGI: Artificial General Intelligence with human-level cognitive capabilities

Timeline Predictions: Estimates of when technological milestones will occur

Expert Surveys: Studies of researcher predictions about AI development

• AGI represents a qualitative leap

• Predictions have high uncertainty

• Multiple factors influence timing

• Consider multiple expert opinions

• Account for uncertainty ranges

• Monitor technological progress

• Treating predictions as certainties

• Ignoring uncertainty factors

• Not updating beliefs with new evidence
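
The advice above (consult several opinions, report a range rather than a single date, and avoid treating any one prediction as certain) can be illustrated with a small Python sketch. It is only an illustration under stated assumptions: the function name `summarize_forecasts` is hypothetical, and the forecast years are invented for the example rather than taken from any real survey.

```python
from statistics import median, quantiles


def summarize_forecasts(years):
    """Summarize a set of forecast years as a median plus an interquartile range."""
    if len(years) < 2:
        raise ValueError("need at least two forecasts to describe a range")
    # quantiles(..., n=4) returns the three quartile cut points.
    q1, _, q3 = quantiles(years, n=4, method="inclusive")
    return {"median": median(years), "low": q1, "high": q3}


# Hypothetical forecast years, invented purely for illustration.
example_forecasts = [2029, 2030, 2035, 2045, 2060]
summary = summarize_forecasts(example_forecasts)
print(f"median {summary['median']}, middle half of forecasts: "
      f"{summary['low']:.0f}-{summary['high']:.0f}")
```

Reporting the range alongside the median keeps the disagreement between forecasters visible, and re-running the summary as new forecasts arrive is one simple way to update beliefs with new evidence.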

Describe the potential societal impacts of AGI emergence and how society might adapt to these changes.

Positive Impacts: AGI could solve major global challenges like disease, poverty, and climate change. It could accelerate scientific discovery, enhance human creativity, and create unprecedented prosperity.

Negative Impacts: Mass unemployment, concentration of power, loss of human agency, and potential existential risks if AGI is misaligned with human values.

Adaptation Strategies: Universal basic income, redefined work and purpose, new forms of education, international governance frameworks, and human-AI collaboration models.

Transition Challenges: Managing economic disruption, ensuring equitable benefits, maintaining democratic institutions, and preserving human dignity and meaning.

The emergence of AGI represents a potential turning point in human history comparable to the agricultural or industrial revolutions. The key to navigating this transition successfully lies in proactive planning, international cooperation, and ensuring that the benefits of AGI are widely shared while risks are carefully managed.

AGI Emergence: The point when AI achieves human-level general intelligence

Societal Adaptation: Changes in institutions and practices to accommodate new technology

Universal Basic Income: Guaranteed income for all citizens regardless of employment

• Prepare for major disruptions

• Focus on beneficial outcomes

• Maintain human agency

• Start preparations early

• Build resilient institutions

• Foster international cooperation

• Not preparing for disruption

• Ignoring distributional effects

• Assuming gradual transition

If AGI arrives in 2030 and can perform most cognitive work as well as humans, what would be the economic implications and how might society restructure to maintain stability and prosperity?

Economic Implications:

• Massive productivity gains (potentially 10-100x current rates)

• Elimination of most cognitive jobs (potentially affecting 80-90% of the current workforce)

• Dramatic reduction in cost of goods and services

• Concentration of wealth among AI owners/controllers

Restructuring Strategies:

• Universal Basic Assets: Provide everyone with ownership of AI systems

• Progressive taxation on AI-generated wealth

• New economic models based on human purpose rather than labor

• Focus on human creativity, relationships, and experiences

• Democratic governance of AI systems

Stability Measures: Gradual transition, international coordination, and social safety nets.

This scenario illustrates the fundamental economic transformation AGI could bring. Traditional economics based on scarcity and labor would need to be completely reimagined. The key challenge is ensuring that the abundance created by AGI benefits all of humanity rather than concentrating power among a few.

Universal Basic Assets: Ownership of productive assets for all citizens

Post-Scarcity Economy: Economic system where basic needs are easily met

Human Purpose Economy: Economic system focused on meaning and fulfillment

• Redistribute AI-generated wealth

• Maintain human dignity

• Ensure equitable access

• Start planning now

• Build inclusive institutions

• Focus on human flourishing

• Assuming current economic models persist

• Not planning for job displacement

• Ignoring distributional consequences

As AGI development approaches, what safety measures should be implemented to ensure beneficial outcomes, and how can these be enforced globally?

Safety Measures:

1. Alignment Research: Ensuring AGI systems share human values and goals

2. Control Mechanisms: Technical methods to maintain human oversight

3. Verification & Validation: Rigorous testing before deployment

4. Redundancy Systems: Multiple safety layers and fail-safes

5. International Standards: Global safety protocols and testing requirements
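
As a rough illustration of measures 3 and 4 (testing before deployment and layered fail-safes), the following Python sketch shows a deployment gate that passes only when every independent check passes and that blocks by default when anything goes wrong. The names `SafetyCheck` and `deployment_allowed` are hypothetical and the checks are placeholders; real verification of advanced AI systems would be far more involved than this.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class SafetyCheck:
    """One independent safety layer (hypothetical structure for illustration)."""
    name: str
    run: Callable[[], bool]


def deployment_allowed(checks: List[SafetyCheck]) -> bool:
    """Return True only if every check explicitly passes.

    Fail-safe defaults: no checks, a crashing check, or any result
    other than True all block deployment.
    """
    if not checks:
        return False
    for check in checks:
        try:
            passed = check.run()
        except Exception:
            passed = False  # a check that errors out counts as a failure
        if passed is not True:
            return False
    return True


# Placeholder checks; in practice each would run a real evaluation.
checks = [
    SafetyCheck("pre_deployment_tests", lambda: True),
    SafetyCheck("human_oversight_controls", lambda: True),
]
print(deployment_allowed(checks))
```

The design choice worth noting is that the gate treats uncertainty as failure: redundancy only adds safety if a missing or broken layer stops deployment rather than being silently skipped.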

Enforcement Strategies:

• International AI safety treaty with binding commitments

• Global monitoring and verification systems

• Cooperative enforcement mechanisms

• Incentive structures for compliance

• Shared research and development standards

Challenges: Geopolitical competition, enforcement difficulties, and coordination problems.

Ensuring AGI safety requires both technical solutions and international cooperation. The challenge is analogous to nuclear non-proliferation but potentially more complex due to the dual-use nature of AI technology. Success requires unprecedented levels of international coordination and trust.

AI Alignment: Ensuring AI systems pursue beneficial goals

Control Problem: Maintaining human oversight of advanced AI

International Cooperation: Global coordination on safety measures

• Safety before capabilities

• International coordination essential

• Verification required

• Start with voluntary standards

• Build monitoring systems

• Create incentive structures

• Racing to develop AGI

• Not coordinating internationally

• Inadequate safety testing

What is the most critical factor for ensuring that AGI development benefits humanity?

While all factors are important, alignment with human values is the most critical. A technically capable AGI that is not aligned with human values could cause catastrophic harm, regardless of economic incentives or international cooperation. Ensuring that AGI systems pursue goals beneficial to humanity is fundamental to safe development.

The answer is C) Alignment with human values.

This highlights the fundamental challenge of AI safety: ensuring that powerful AI systems pursue goals that are beneficial to humanity. Technical capability alone is insufficient; the system must be designed to optimize for human welfare. This requires deep understanding of human values and sophisticated technical solutions.

Value Alignment: Ensuring AI systems pursue beneficial goals

Human Values: Goals and preferences that promote human welfare

AI Safety: Ensuring beneficial AI development outcomes

• Values alignment is fundamental

• Safety precedes capability

• Human welfare priority

• Invest in alignment research

• Develop verification methods

• Build safety into systems

• Prioritizing capability over safety

• Assuming human-like values

• Not addressing alignment problem

FAQ

Q: Will AI take over all jobs and make humans obsolete?

A: While AI will automate many jobs, especially routine cognitive tasks, it's more likely to augment human capabilities than make humans obsolete. History shows that technological revolutions create new types of jobs even as they eliminate old ones. The key is ensuring that the transition is managed thoughtfully, with investments in education, retraining, and social safety nets to help people adapt to changing economic realities.

Q: How can I prepare for a future with advanced AI?

A: Focus on skills that complement AI: creativity, emotional intelligence, complex problem-solving, and ethical reasoning. Learn to work with AI tools effectively, stay curious and adaptable, and consider interdisciplinary approaches that combine technical skills with domain expertise. Most importantly, develop the ability to think critically about AI's role in society and advocate for beneficial development.