What are Digital Twins?


Digital Twin Fundamentals:

Digital twins are virtual replicas of physical objects, systems, or processes that leverage real-time data and simulation to mirror their physical counterparts. They bridge the physical and digital worlds, enabling organizations to monitor, analyze, predict, and optimize performance in ways never before possible.

Key digital twin components:

- Physical Entity: The real-world object being replicated

- Digital Replica: The virtual model that mirrors the entity's relevant properties and current state

- Connection: Real-time data flow between physical and digital

- Simulation: Modeling and predictive analytics capabilities

Digital twins transform industries by providing unprecedented insights, enabling predictive maintenance, optimizing operations, and accelerating innovation cycles.

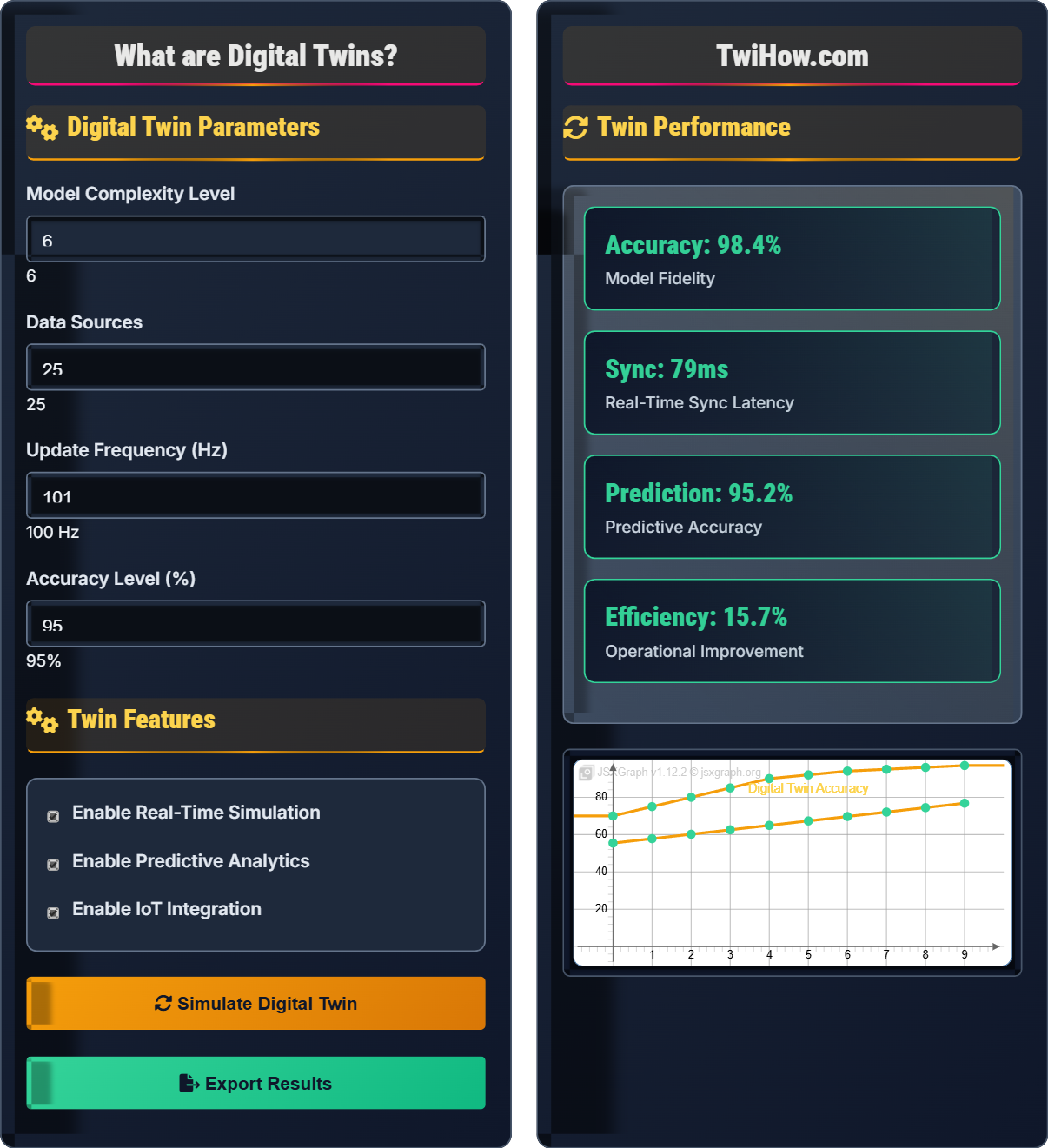

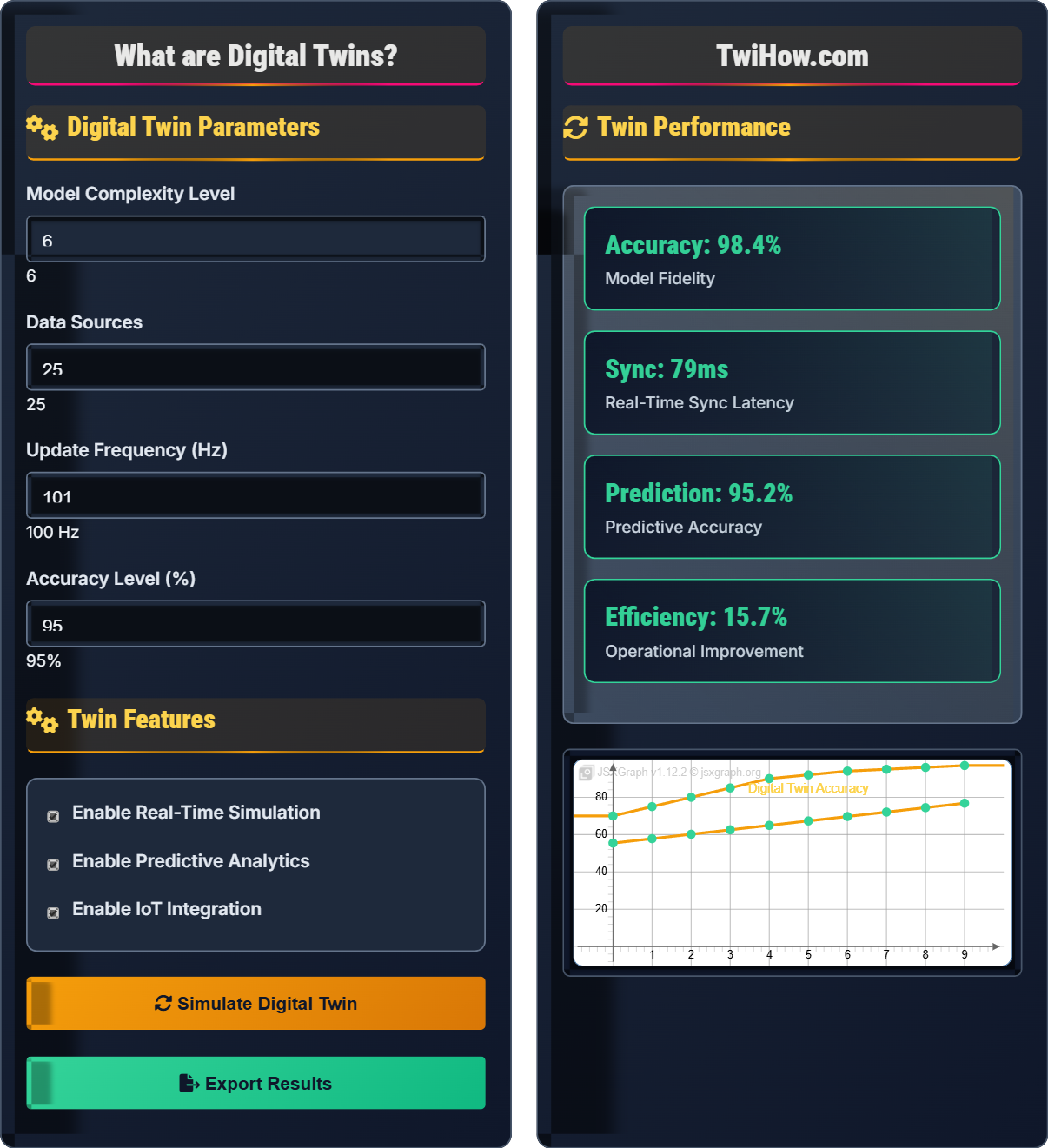

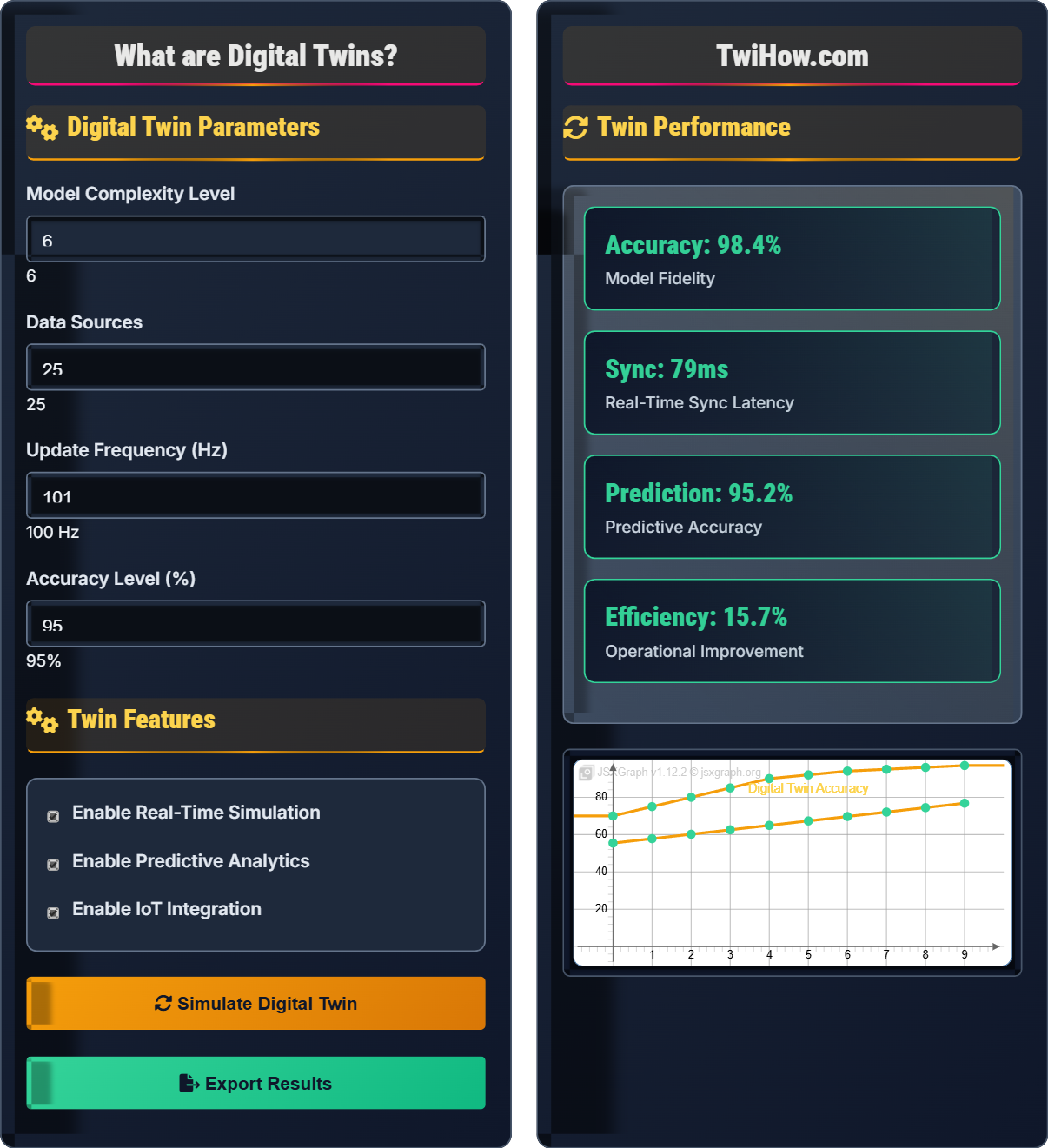


Twin Performance

| Component | Status | Accuracy | Latency (ms) |
|---|---|---|---|
| Sensors | Active | 98% | 10 |
| Connectivity | Active | 99% | 15 |
| Modeling | Active | 95% | 20 |
| Analytics | Active | 92% | 25 |
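The table does not say whether these stages run one after another. If you assume they do (each reading passes through sensing, transport, modeling, and analytics in turn), a rough end-to-end latency budget is simply the sum of the stage latencies. A minimal Python sketch under that assumption, using the values from the table:

```python
# Stage latencies copied from the Twin Performance table.
# Assumption (not stated in the table): the stages run strictly in series,
# so one reading's end-to-end latency is the sum of the stage latencies.
STAGE_LATENCY_MS = {
    "Sensors": 10,
    "Connectivity": 15,
    "Modeling": 20,
    "Analytics": 25,
}


def end_to_end_latency_ms(stages: dict[str, int]) -> int:
    """Total latency when each stage waits for the previous one to finish."""
    return sum(stages.values())


def slowest_stage(stages: dict[str, int]) -> str:
    """Stage contributing the most latency, i.e. the first candidate to optimize."""
    return max(stages, key=stages.get)


if __name__ == "__main__":
    print(f"End-to-end latency: {end_to_end_latency_ms(STAGE_LATENCY_MS)} ms")  # 70 ms
    print(f"Largest contributor: {slowest_stage(STAGE_LATENCY_MS)}")  # Analytics
```

If the stages were pipelined in parallel instead, throughput would be limited by the slowest stage rather than by the sum, which is why the second function is worth keeping alongside the first.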

How Digital Twin Technology Works

A digital twin is a virtual representation of a physical object, system, or process that leverages real-time data and simulation to mirror its physical counterpart. It creates a dynamic bridge between the physical and digital worlds, enabling organizations to monitor, analyze, predict, and optimize performance in ways that were previously impossible.

The digital twin architecture consists of three core components, usually extended with a simulation engine:

- Physical Entity: The real-world object or system

- Digital Replica: Virtual model that mirrors the entity's relevant properties and state

- Connection Layer: Real-time data flow and synchronization

- Simulation Engine: Modeling and predictive analytics
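The four components can be sketched as plain Python classes. This is a toy illustration, not a reference architecture: the class names, the callable used to read the physical asset, and the two-point linear extrapolation inside the simulation engine are all assumptions chosen for brevity.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class PhysicalEntity:
    """Stand-in for the real asset: read() returns its current sensor values."""
    asset_id: str
    read: Callable[[], dict[str, float]]


@dataclass
class DigitalReplica:
    """Virtual model holding the latest mirrored state plus its history."""
    asset_id: str
    state: dict[str, float] = field(default_factory=dict)
    history: list[dict[str, float]] = field(default_factory=list)


class ConnectionLayer:
    """Moves readings from the physical entity into the replica."""

    def __init__(self, entity: PhysicalEntity, replica: DigitalReplica):
        self.entity = entity
        self.replica = replica

    def sync(self) -> None:
        reading = self.entity.read()
        self.replica.state = dict(reading)
        self.replica.history.append(dict(reading))


class SimulationEngine:
    """Toy predictor: extrapolates one variable from the last two synced states."""

    def predict(self, replica: DigitalReplica, key: str, steps_ahead: int) -> float:
        if len(replica.history) < 2:
            return replica.state[key]
        prev, last = replica.history[-2][key], replica.history[-1][key]
        return last + (last - prev) * steps_ahead


if __name__ == "__main__":
    temps = iter([70.0, 72.0, 74.5])  # simulated readings from a hypothetical pump
    pump = PhysicalEntity("pump-01", lambda: {"temp_c": next(temps)})
    twin = DigitalReplica("pump-01")
    link = ConnectionLayer(pump, twin)
    for _ in range(3):
        link.sync()
    print(twin.state)  # {'temp_c': 74.5}
    print(SimulationEngine().predict(twin, "temp_c", steps_ahead=2))  # 79.5
```

Keeping the connection layer as its own object mirrors the point made in the text: the replica is only as current as the last successful sync.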

Digital twins rely on several interconnected technologies:

- IoT Sensors: Real-time data collection

- Cloud Computing: Scalable processing and storage

- AI/ML: Predictive analytics and optimization

- 3D Modeling: Visual representation and simulation

The digital twin data pipeline involves:

- Data Collection: Sensors gather real-time physical data

- Data Integration: Multiple data sources combined

- Model Updating: Digital replica updated continuously

- Analysis: Patterns and insights extracted

- Prediction: Future behavior forecasting

- Optimization: Recommendations for improvement

This pipeline enables real-time monitoring and predictive capabilities.
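The six pipeline stages can be sketched as small Python functions. This is a minimal sketch assuming a single process, in-memory state, and simple statistics in place of real AI/ML models; every function name, the rolling-window size, the linear extrapolation, and the "reduce load" recommendation are hypothetical.

```python
from statistics import mean


def collect(sources):
    """Data Collection: take one reading from each source (callables here)."""
    return {name: read() for name, read in sources.items()}


def integrate(readings, scale):
    """Data Integration: combine sources into one record, applying a
    per-source conversion factor (e.g. to SI units); default factor is 1."""
    return {name: value * scale.get(name, 1.0) for name, value in readings.items()}


def update_model(model, record, window=5):
    """Model Updating: keep a rolling window of recent values per variable."""
    for name, value in record.items():
        series = model.setdefault(name, [])
        series.append(value)
        del series[:-window]  # drop everything older than the last `window` values
    return model


def analyze(model):
    """Analysis: mean and average step change of each variable over the window."""
    insights = {}
    for name, series in model.items():
        slope = (series[-1] - series[0]) / (len(series) - 1) if len(series) > 1 else 0.0
        insights[name] = {"mean": mean(series), "slope": slope}
    return insights


def predict(model, insights, steps_ahead):
    """Prediction: linear extrapolation from the latest value."""
    return {name: model[name][-1] + insights[name]["slope"] * steps_ahead for name in model}


def optimize(forecast, limits):
    """Optimization: recommend action for any variable forecast to exceed its limit."""
    return [f"reduce load: {name} forecast {value:.1f} > limit {limits[name]}"
            for name, value in forecast.items() if name in limits and value > limits[name]]


if __name__ == "__main__":
    temps = iter([60.0, 62.0, 64.0, 66.0])
    sources = {"temp_c": lambda: next(temps)}
    model = {}
    for _ in range(4):
        update_model(model, integrate(collect(sources), scale={}))
    insights = analyze(model)
    forecast = predict(model, insights, steps_ahead=3)
    print(forecast)  # {'temp_c': 72.0}
    print(optimize(forecast, limits={"temp_c": 70.0}))
    # ['reduce load: temp_c forecast 72.0 > limit 70.0']
```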

Key areas where digital twins are transforming industries:

- Manufacturing: Predictive maintenance, quality optimization

- Healthcare: Patient monitoring, surgical simulation

- Smart Cities: Traffic management, infrastructure monitoring

- Aviation: Aircraft maintenance, flight optimization

- Energy: Grid optimization, equipment monitoring

- Automotive: Vehicle testing, fleet management

Digital Twin Fundamentals

Virtual replica, real-time synchronization, predictive analytics, IoT integration, 3D modeling.

\(\text{Accuracy} = \frac{\text{Correct Predictions}}{\text{Total Predictions}} \times 100\%\)

Here, accuracy measures how faithfully the digital twin's predictions match the behavior actually observed in the physical entity.
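For continuous quantities such as temperature, the formula leaves open what counts as a "correct" prediction. The sketch below assumes a prediction is correct when it lands within a chosen tolerance of the observed value; the tolerance is an illustrative parameter, not part of the definition.

```python
def twin_accuracy(predicted, observed, tolerance=0.0):
    """Percentage of predictions that match the observed value within `tolerance`.

    Implements Accuracy = Correct Predictions / Total Predictions x 100%,
    with "correct" defined by the tolerance (an assumption; the formula
    itself does not define it).
    """
    if not predicted or len(predicted) != len(observed):
        raise ValueError("predicted and observed must be non-empty and equal length")
    correct = sum(abs(p - o) <= tolerance for p, o in zip(predicted, observed))
    return correct / len(predicted) * 100.0


if __name__ == "__main__":
    predicted = [70.1, 71.0, 73.5, 75.0]  # twin's forecast temperatures, degrees C
    observed = [70.0, 71.4, 73.4, 77.0]  # what the physical sensor later reported
    print(twin_accuracy(predicted, observed, tolerance=0.5))  # 75.0
```

Tracking this figure over time is one way to notice the degradation mentioned in the list that follows: if updates stop or data quality drops, the percentage falls.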

- Digital twins must maintain real-time synchronization

- Accuracy degrades without proper data updates

- Security is critical for both physical and digital assets

Applications

Manufacturing, healthcare, smart cities, aviation, energy, automotive.

- Production optimization

- Predictive maintenance

- Quality assurance

- Resource management

Challenges:

- Data privacy and security concerns

- Initial setup and maintenance costs

- Integration complexity with legacy systems

Digital Twin Technology Learning Quiz

Which of the following is NOT a core component of a digital twin system?

The three core components of a digital twin system are: the Physical Entity (real-world object), the Digital Replica (virtual model), and the Connection Layer (real-time data synchronization). While blockchain technology can enhance security and traceability in digital twin systems, it is not a fundamental architectural component. The essential elements focus on the relationship between physical and digital representations and their continuous synchronization.

The answer is D) Blockchain Verification.

Understanding the core architecture of digital twins is essential because it defines the fundamental relationship that enables all other capabilities. The physical entity provides the real-world data, the digital replica creates the virtual model, and the connection layer ensures they stay synchronized. This triad forms the foundation for all advanced features like predictive analytics and optimization. Additional technologies like blockchain can enhance digital twins but are not part of the basic architecture.

Digital Twin: Virtual replica of physical entity

Physical Entity: Real-world object being mirrored

Connection Layer: Real-time data synchronization mechanism

• All three core components must work together

• Real-time synchronization is essential

• Accuracy depends on data quality

• Remember the physical-digital-connection triad

• Data quality directly impacts twin accuracy

• Latency affects synchronization effectiveness

• Confusing digital twin with simple 3D modeling

• Thinking static models qualify as digital twins

• Ignoring the real-time synchronization requirement

Explain the role of IoT sensors in digital twin systems. How do they contribute to the accuracy and functionality of digital twins?

Role of IoT Sensors: IoT sensors serve as the sensory organs of digital twin systems, continuously collecting real-time data from the physical entity. They measure parameters like temperature, pressure, vibration, location, and other relevant metrics.

Contribution to Accuracy: The quality and quantity of sensor data directly impact digital twin accuracy. More sensors provide more complete coverage, while high-quality sensors ensure reliable measurements. The sampling frequency determines the temporal resolution of the digital twin.

Functionality Enhancement: Sensors enable real-time monitoring, predictive analytics, and anomaly detection. They provide the continuous feedback loop necessary for the digital twin to remain synchronized with the physical entity.

Data Processing: Raw sensor data undergoes filtering, calibration, and fusion to create accurate inputs for the digital twin model, ensuring the virtual representation accurately reflects the physical state.

Think of IoT sensors as the nervous system of the digital twin: they constantly gather information about the physical entity's condition and transmit it to the digital replica. Without this continuous flow of data, the digital twin would quickly become outdated and inaccurate. The more comprehensive and frequent the sensor data, the more faithfully the digital twin can mirror the physical entity. This is why sensor placement, quality, and calibration are critical factors in digital twin success.
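The filtering, calibration, and fusion steps described above can be sketched as three small functions. The specific techniques are assumptions picked for illustration: a median filter for spike rejection, a linear gain/offset correction for calibration, and an inverse-variance weighted average for fusing redundant sensors. Real deployments may use Kalman filters or vendor calibration curves instead.

```python
from statistics import median


def median_filter(samples, k=3):
    """Filtering: replace each sample with the median of a window of up to k
    neighbours (truncated at the ends) to suppress isolated spikes."""
    half = k // 2
    return [median(samples[max(0, i - half): i + half + 1]) for i in range(len(samples))]


def calibrate(raw, gain, offset):
    """Calibration: linear correction, corrected = gain * raw + offset, where the
    coefficients would come from comparing the sensor with a reference instrument."""
    return gain * raw + offset


def fuse(estimates):
    """Fusion: inverse-variance weighted average of (value, variance) pairs,
    so more precise sensors receive proportionally more weight."""
    weights = [1.0 / variance for _, variance in estimates]
    weighted_sum = sum(w * value for w, (value, _) in zip(weights, estimates))
    return weighted_sum / sum(weights)


if __name__ == "__main__":
    raw = [20.1, 20.2, 35.0, 20.3, 20.2]  # 35.0 is a transient spike
    clean = median_filter(raw)  # the spike is replaced by a neighbouring value
    corrected = [calibrate(x, gain=1.02, offset=-0.4) for x in clean]
    # Fuse the latest corrected reading with a second, noisier sensor at the same point.
    print(fuse([(corrected[-1], 0.04), (20.0, 0.09)]))
```

The gain, offset, and variance values in the usage are made up; in practice they come from calibration records and sensor datasheets.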

IoT Sensors: Devices that collect real-time physical data

Temporal Resolution: Frequency of data collection

Data Fusion: Combining multiple sensor inputs

• Sensor placement affects coverage completeness

• Data quality directly impacts twin accuracy

• Calibration ensures measurement reliability

• Place sensors at critical monitoring points

• Use redundant sensors for critical measurements

• Regular calibration maintains accuracy

• Underestimating sensor placement importance

• Not calibrating sensors regularly

• Ignoring sensor data quality issues

A manufacturing company wants to implement a digital twin for a production line with 100 machines. Each machine requires 10 sensors reporting data every second. Calculate the total data rate generated and estimate the storage requirements for one month of operation. If the company has a 1 Gbps network, what percentage of bandwidth will be used for the digital twin system assuming each sensor generates 1 KB of data per reading?

Total Data Rate Calculation:

Per machine per second: 10 sensors × 1 KB = 10 KB

Total for 100 machines: 100 × 10 KB = 1,000 KB/s = 1 MB/s

Monthly Storage Requirements:

Per day: 1 MB/s × 86,400 s = 86,400 MB = 86.4 GB

Per month (30 days): 86.4 GB × 30 = 2,592 GB = 2.59 TB

Network Bandwidth Usage:

Digital twin traffic: 1 MB/s = 8 Mbps

Percentage of 1 Gbps: (8 Mbps ÷ 1000 Mbps) × 100% = 0.8%

The digital twin system would use only 0.8% of the available bandwidth while requiring 2.59 TB of storage monthly.

This problem illustrates the data scale challenges of digital twins. While 100 machines with 10 sensors each might seem like a lot, the actual network impact is quite manageable at only 0.8% of a 1 Gbps connection. However, the storage requirements grow significantly over time, reaching nearly 2.6 TB per month. This demonstrates why data compression, edge processing, and selective data retention strategies are important in digital twin implementations. The key insight is that while the real-time data flow is moderate, the accumulated historical data can be substantial.
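The same arithmetic as a small Python function, using decimal units (1 KB = 1,000 bytes, 1 TB = 10^12 bytes) to match the worked figures. The `overhead` factor is a hypothetical addition for protocol overhead on the wire; leaving it at 1.0 reproduces the numbers in the solution.

```python
SECONDS_PER_DAY = 86_400


def twin_data_budget(machines, sensors_per_machine, bytes_per_reading,
                     readings_per_second, days, link_bps, overhead=1.0):
    """Return (payload bytes/s, stored bytes over `days`, % of link used).

    `overhead` scales only the network share (e.g. 1.2 for 20% protocol
    overhead); storage counts payload bytes only.
    """
    bytes_per_s = machines * sensors_per_machine * bytes_per_reading * readings_per_second
    storage_bytes = bytes_per_s * SECONDS_PER_DAY * days
    link_share_pct = bytes_per_s * overhead * 8 * 100 / link_bps
    return bytes_per_s, storage_bytes, link_share_pct


if __name__ == "__main__":
    rate, storage, pct = twin_data_budget(100, 10, 1_000, 1, 30, 1_000_000_000)
    print(f"{rate / 1e6:.0f} MB/s, {storage / 1e12:.2f} TB/month, {pct:.1f}% of link")
    # prints "1 MB/s, 2.59 TB/month, 0.8% of link"
```

Changing one argument at a time (for example `readings_per_second=10`) shows quickly that storage grows linearly with sampling rate, which is where compression and retention policies pay off.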

Data Rate: Amount of data transmitted per unit time

Storage Requirements: Total data volume over a time period

Bandwidth Usage: Percentage of network capacity utilized

• Real-time data rates are typically moderate

• Historical storage grows over time

• Edge processing reduces network load

• Compress data to reduce storage needs

• Use edge computing to pre-process data

• Implement data retention policies

• Underestimating long-term storage requirements

• Not accounting for data redundancy

• Ignoring network overhead

Design a predictive maintenance system using digital twins for an industrial facility with 50 critical machines. Identify the key parameters to monitor, explain how the digital twin predicts failures, and calculate the potential cost savings if the system prevents 20% of unplanned downtime events.

Key Parameters to Monitor:

1. Vibration Analysis: Detects mechanical wear and misalignment

2. Temperature Monitoring: Identifies overheating components

3. Voltage/Current: Monitors electrical health

4. Pressure Levels: Tracks hydraulic/pneumatic systems

5. Acoustic Emissions: Detects unusual sounds indicating wear

Prediction Mechanism: The digital twin compares real-time sensor data against historical patterns and failure signatures. Machine learning algorithms analyze trends, identify anomalies, and predict when components are likely to fail based on degradation patterns.
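The prediction mechanism can be illustrated without machine learning, using two simple statistical stand-ins: a z-score test against a baseline recorded during healthy operation (anomaly detection), and a least-squares line through a degradation indicator extrapolated to a failure threshold (time-to-failure estimate). Both are substitutions for the ML models the text describes, and the baseline, threshold, and units in the usage are hypothetical.

```python
from statistics import mean, stdev


def is_anomalous(value, baseline, z_threshold=3.0):
    """Anomaly check: z-score of one reading against healthy baseline readings."""
    mu, sigma = mean(baseline), stdev(baseline)
    if sigma == 0:
        return value != mu
    return abs(value - mu) / sigma > z_threshold


def linear_fit(times, values):
    """Ordinary least-squares slope and intercept of values against times."""
    t_bar, v_bar = mean(times), mean(values)
    sxx = sum((t - t_bar) ** 2 for t in times)
    sxy = sum((t - t_bar) * (v - v_bar) for t, v in zip(times, values))
    slope = sxy / sxx
    return slope, v_bar - slope * t_bar


def time_to_failure(times, values, failure_level):
    """Time remaining after the last observation until the fitted trend reaches
    failure_level. Returns None when the indicator is flat or improving."""
    slope, intercept = linear_fit(times, values)
    if slope <= 0:
        return None
    crossing = (failure_level - intercept) / slope
    return max(0.0, crossing - times[-1])


if __name__ == "__main__":
    healthy_vibration = [2.0, 2.1, 1.9, 2.05, 1.95]  # mm/s RMS, hypothetical baseline
    print(is_anomalous(3.4, healthy_vibration))  # True
    days = [0, 7, 14, 21, 28]
    vibration = [2.0, 2.3, 2.6, 2.9, 3.2]  # steady upward drift
    print(time_to_failure(days, vibration, failure_level=7.1))  # roughly 91 days
```

A linear trend is the simplest possible degradation model; many failure modes accelerate near the end of life, which is one reason the text stresses continuously updating failure models.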

Cost Savings Calculation: If unplanned downtime costs $100,000 per occurrence and there are typically 50 events annually, total cost = $5 million. Preventing 20% of events saves $1 million annually.
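The savings arithmetic as a small function. The $100,000 per event and 50 events per year are the scenario's stated assumptions; the optional `annual_system_cost` parameter is a hypothetical addition that nets out what running the digital twin itself costs, which matters when evaluating ROI.

```python
def downtime_savings(cost_per_event, events_per_year, prevented_pct, annual_system_cost=0):
    """Return (baseline annual downtime cost, gross savings, net savings)."""
    baseline_cost = cost_per_event * events_per_year
    gross = baseline_cost * prevented_pct / 100  # integer percent keeps the math exact
    return baseline_cost, gross, gross - annual_system_cost


if __name__ == "__main__":
    baseline, gross, net = downtime_savings(100_000, 50, 20)
    print(f"baseline ${baseline:,.0f}, savings ${gross:,.0f}")
    # prints "baseline $5,000,000, savings $1,000,000"
```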

Additional benefits include reduced maintenance costs, extended equipment life, and improved safety.

Predictive maintenance using digital twins turns reactive maintenance into proactive optimization. The digital twin continuously monitors equipment health and learns from historical data to identify patterns that precede failures. This allows maintenance teams to schedule repairs during planned downtime rather than dealing with emergency repairs. The key insight is that small deviations from normal operation can indicate significant problems developing, and the digital twin surfaces these subtle signals so that failures can be predicted in advance, sometimes by weeks or months, depending on the failure mode and the available data history.

Predictive Maintenance: Maintenance based on predicted failure timing

Degradation Patterns: Gradual decline indicators before failure

Failure Signatures: Characteristic patterns before equipment failure

• Early detection prevents catastrophic failures

• Historical data improves prediction accuracy

• Maintenance timing is critical for ROI

• Focus on critical equipment first

• Combine multiple sensor types for accuracy

• Continuously update failure models

• Monitoring too many parameters without focus

• Not validating prediction accuracy

• Ignoring false positive alarms

Which of the following represents the most significant challenge in implementing digital twin technology?

While all aspects of digital twin implementation present challenges, integrating with existing systems is often the most significant obstacle. Organizations typically have legacy systems, different data formats, incompatible protocols, and established workflows that don't readily accommodate digital twin technology. This integration challenge encompasses data standardization, system compatibility, and organizational change management.

The answer is C) Integrating with existing systems.

The integration challenge is multifaceted: it includes technical aspects like connecting different data formats and protocols, but also organizational challenges like changing established workflows and getting buy-in from different departments. While creating accurate models and collecting data are important, these are often more straightforward technical problems. Integration requires coordination across multiple systems, teams, and sometimes corporate cultures, making it the most complex aspect of digital twin implementation.
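Much of the technical side of integration is translating each system's formats and units into one schema the twin understands, often in a middleware layer. A minimal adapter-pattern sketch follows; both source formats, all field names, and the Fahrenheit-to-Celsius case are hypothetical examples, not any particular product's protocol.

```python
import csv
import io
import json


def from_legacy_csv(line):
    """Hypothetical legacy export, one CSV row: 'machine_id,timestamp,temp_f'."""
    machine_id, ts, temp_f = next(csv.reader(io.StringIO(line)))
    return {"machine_id": machine_id, "timestamp": int(ts),
            "temp_c": round((float(temp_f) - 32) * 5 / 9, 2)}


def from_modern_json(payload):
    """Hypothetical newer gateway:
    {"id": ..., "ts": ..., "temperature": {"value": ..., "unit": "C"}}."""
    doc = json.loads(payload)
    temp = doc["temperature"]
    if temp["unit"] != "C":
        raise ValueError(f"unexpected unit {temp['unit']!r}")
    return {"machine_id": doc["id"], "timestamp": int(doc["ts"]),
            "temp_c": float(temp["value"])}


# One adapter per source; adding a system means adding an entry, not changing the twin.
ADAPTERS = {"legacy_csv": from_legacy_csv, "modern_json": from_modern_json}


def normalize(source_type, raw):
    """Route a raw message to its adapter so the twin only ever sees one schema."""
    return ADAPTERS[source_type](raw)


if __name__ == "__main__":
    print(normalize("legacy_csv", "M-7,1700000000,212"))
    print(normalize("modern_json",
                    '{"id": "M-8", "ts": 1700000001, '
                    '"temperature": {"value": 37.5, "unit": "C"}}'))
```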

System Integration: Connecting digital twin with existing infrastructure

Legacy Systems: Older technology platforms

Data Standardization: Converting to consistent formats

• Integration requires cross-functional collaboration

• Legacy systems often lack standard APIs

• Change management is essential for success

• Start with pilot projects to prove integration

• Use middleware solutions for compatibility

• Engage all stakeholders early in process

• Underestimating integration complexity

• Not involving IT teams early enough

• Ignoring organizational resistance

FAQ

Q: How is a digital twin different from a regular computer model?

A: The key difference is real-time connection and synchronization. A regular computer model is static: it represents a system at a particular point in time. A digital twin is continuously updated with real-time data from the physical system it represents, creating a living, dynamic replica that mirrors the current state of the physical entity.

Regular models are typically used for design and planning, while digital twins enable ongoing monitoring, prediction, and optimization. Digital twins also combine live data with historical data and predictive algorithms to forecast the future behavior of the specific asset they mirror; a static model can simulate scenarios, but it cannot track how that particular asset is actually evolving.

Q: What are the main business benefits of digital twins?

A: Digital twins offer several key business benefits:

Operational Efficiency: Real-time monitoring and optimization reduce waste and improve productivity.

Predictive Maintenance: Reduces unexpected downtime and extends equipment life by predicting failures before they occur.

Cost Reduction: Optimized processes and reduced maintenance costs lead to significant savings.

Improved Quality: Continuous monitoring helps identify quality issues early in production.

Innovation Acceleration: Safe virtual testing environment for new processes and products.

However, businesses must consider implementation costs, data security, and staff training requirements when evaluating ROI.