What is Edge Computing?

Complete guide • Architecture • Applications • Examples

Edge Computing Fundamentals:

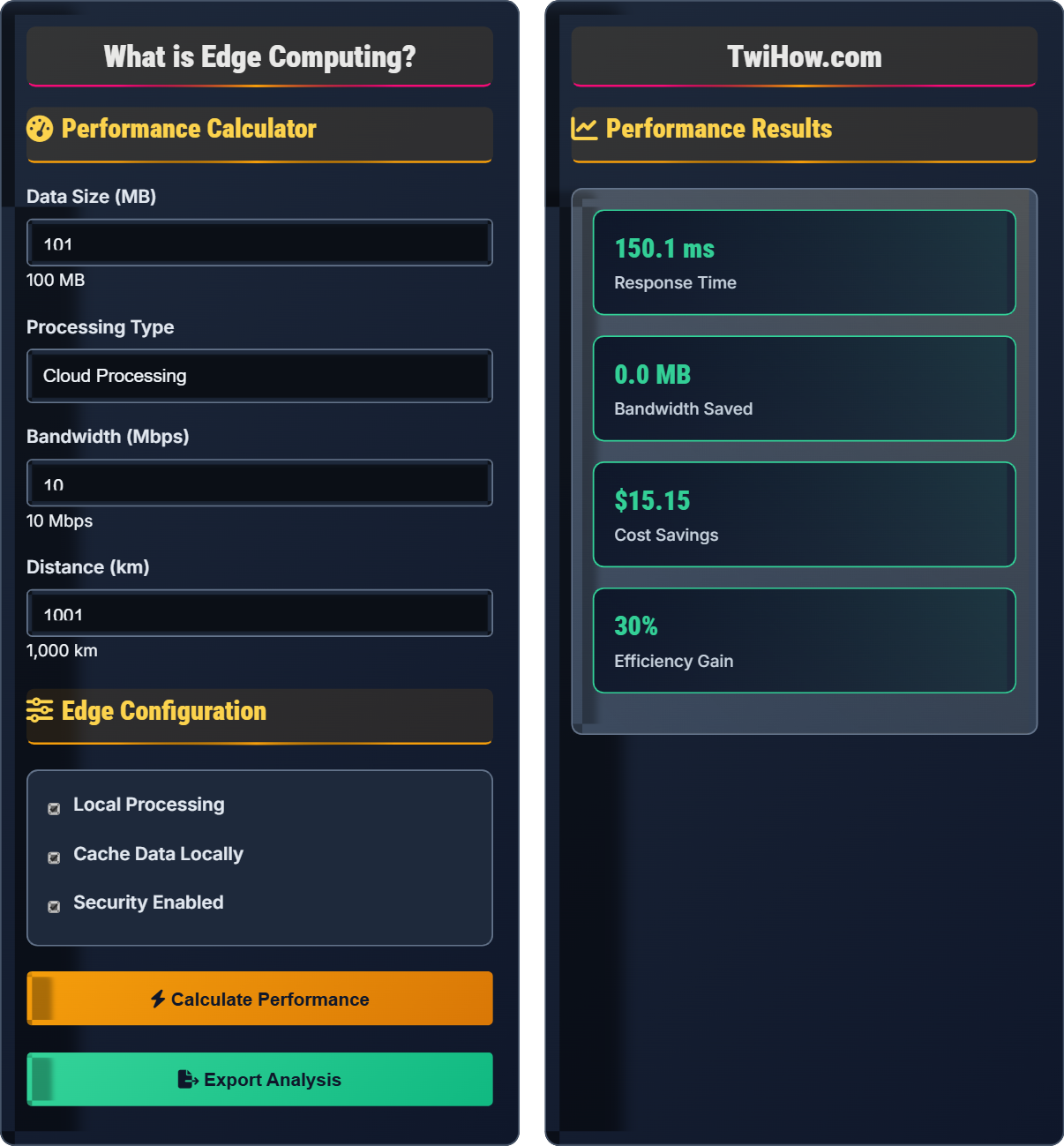

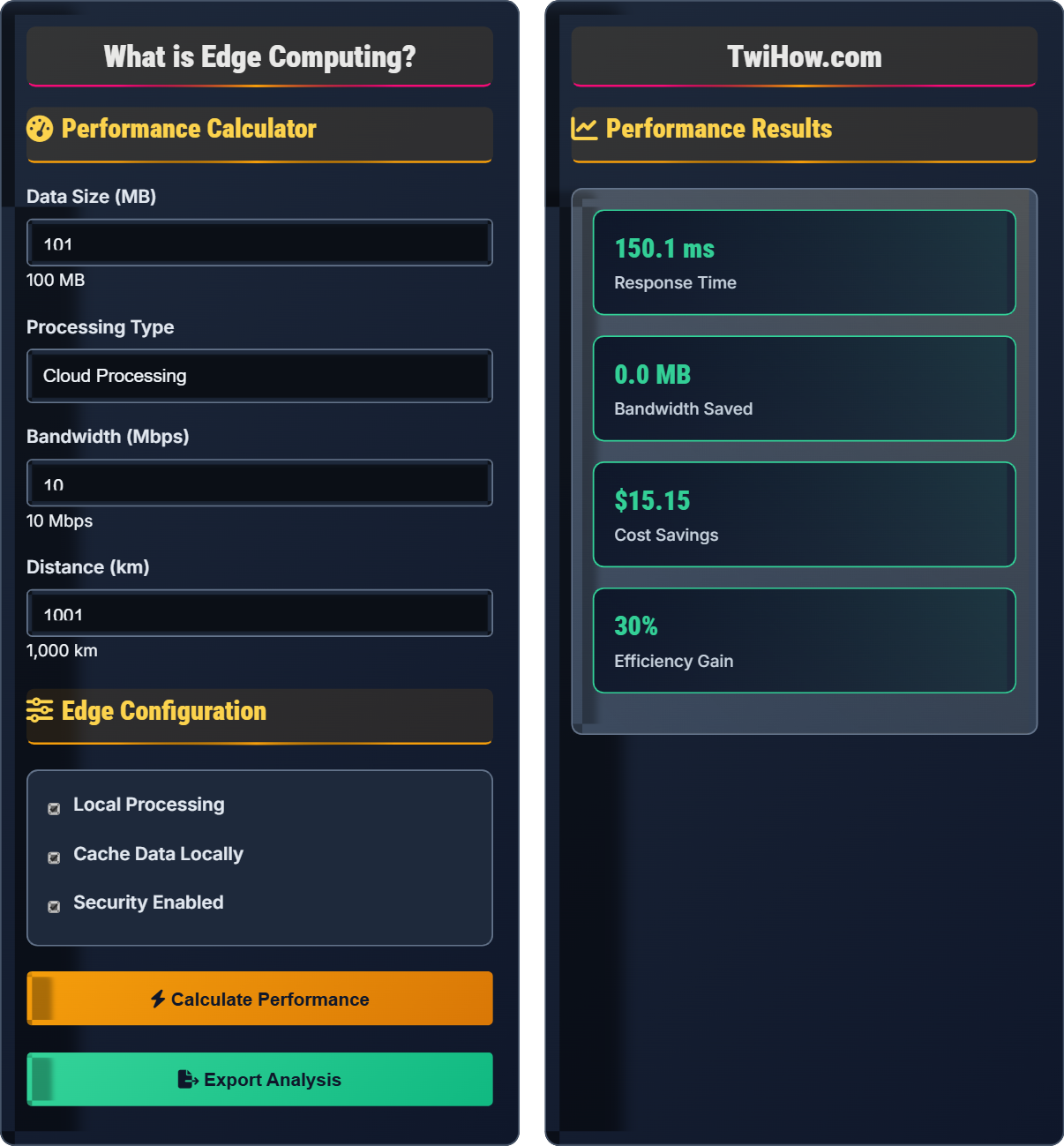

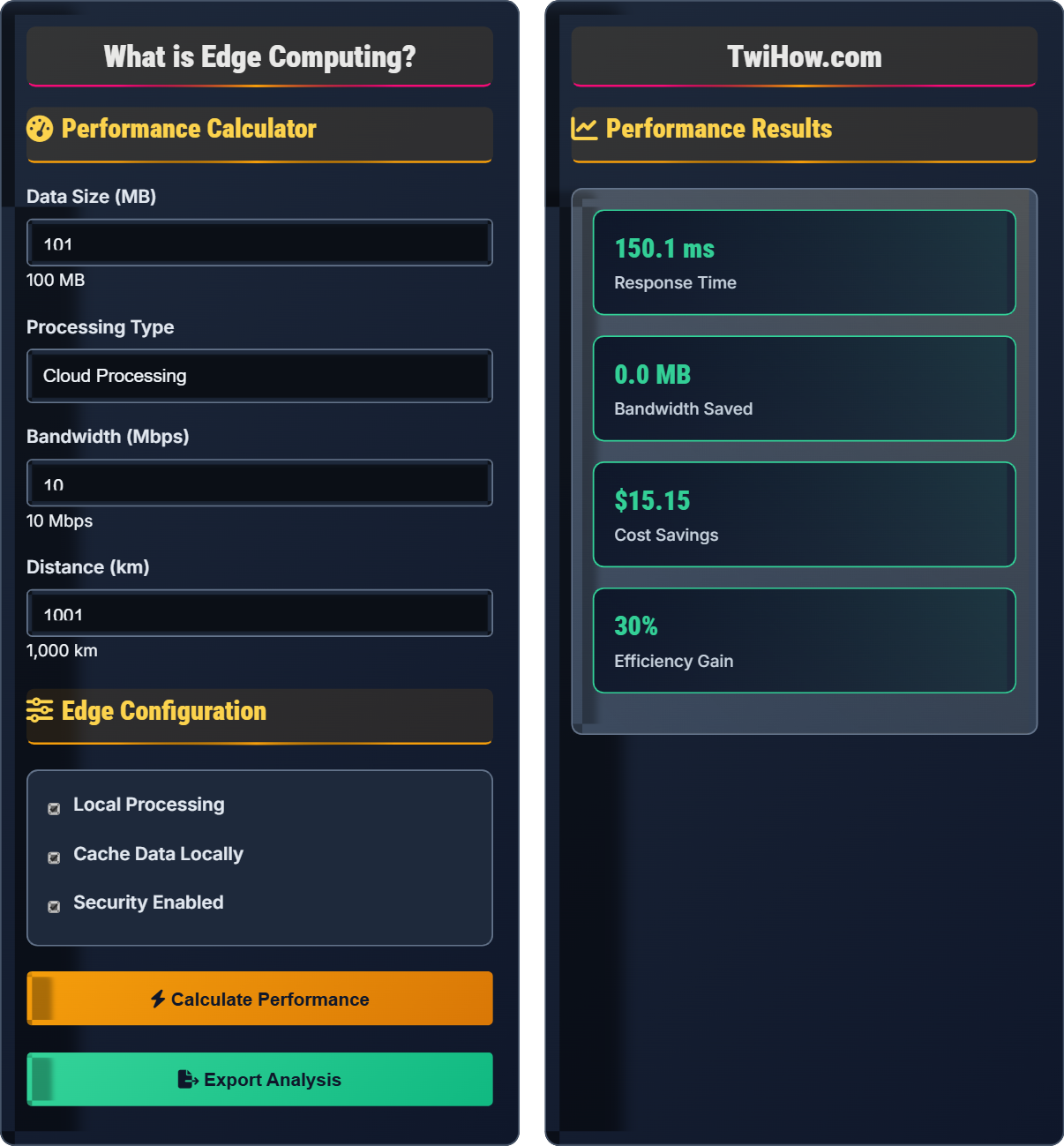

Edge computing brings computation and data storage closer to devices that generate and consume data. This reduces latency, saves bandwidth, and enables real-time processing for applications that require immediate responses.

Key edge computing benefits:

- Reduced Latency: Processing happens near data source

- Bandwidth Savings: Less data sent to central servers

- Improved Reliability: Local processing during network issues

- Enhanced Security: Sensitive data processed locally

- Real-time Processing: Immediate response to events

Edge computing complements cloud computing: time-sensitive data is processed locally, while the cloud handles complex analytics and long-term storage.
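The "Improved Reliability" benefit can be illustrated with a store-and-forward buffer: the edge node keeps working while the uplink is down and delivers queued messages once it returns. This is a minimal sketch, not a production design. The `StoreAndForward` class, the `fake_send` function, and the buffer size are all hypothetical names and values introduced for the example.

```python
from collections import deque


class StoreAndForward:
    """Buffers messages while the uplink is down and flushes them on reconnect."""

    def __init__(self, send, max_buffered: int = 1000):
        # send is a hypothetical callable that raises ConnectionError on failure.
        self.send = send
        # When the buffer is full, the oldest message is dropped first.
        self.pending = deque(maxlen=max_buffered)

    def publish(self, message) -> None:
        self.pending.append(message)
        self.flush()

    def flush(self) -> None:
        while self.pending:
            try:
                self.send(self.pending[0])
            except ConnectionError:
                return  # keep the message and retry on the next publish
            self.pending.popleft()


# Simulated uplink that starts offline (assumption for the demo).
link = {"online": False}
delivered = []


def fake_send(message):
    if not link["online"]:
        raise ConnectionError("uplink down")
    delivered.append(message)


outbox = StoreAndForward(fake_send)
outbox.publish("t=1 temp=70")
outbox.publish("t=2 temp=71")  # still offline, both messages stay buffered
link["online"] = True
outbox.publish("t=3 temp=72")  # reconnect: all three are delivered in order
print(delivered)
```

A bounded buffer is a deliberate trade-off here: an edge device with limited storage would rather lose the oldest readings than run out of memory during a long outage.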

Edge Computing Concepts

Edge computing is a distributed computing paradigm that brings computation and data storage closer to the location where it's needed, to improve response times and save bandwidth. Instead of processing data in distant cloud servers, edge computing processes it locally on devices or nearby edge servers.

Core Principles: Proximity, Low latency, Bandwidth efficiency, Security.

Edge computing architecture includes multiple layers: end devices (IoT sensors, smartphones), edge gateways, edge clouds, and central clouds. Each layer processes data at different levels of complexity and proximity to the data source.

Key Components: Edge devices, Gateways, Micro data centers, Orchestration platforms.

\( \text{Latency} = \text{Transmission Time} + \text{Processing Time} + \text{Propagation Delay} \)
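To make the formula concrete, the sketch below plugs in illustrative numbers for an edge server and a distant cloud region. Every figure (payload size, link speed, distances, processing times) is an assumption chosen for the example, and the estimate is one-way and ignores queuing and routing hops.

```python
# Illustrative one-way latency estimate using the three terms of the formula.
# All numbers are assumptions for the example, not measurements.

SIGNAL_SPEED_M_PER_S = 2.0e8  # roughly two thirds of the speed of light, typical for optical fiber


def latency_ms(payload_bits: float, bandwidth_bps: float,
               distance_m: float, processing_ms: float) -> float:
    """Return transmission + processing + propagation delay in milliseconds."""
    transmission_ms = payload_bits / bandwidth_bps * 1000.0
    propagation_ms = distance_m / SIGNAL_SPEED_M_PER_S * 1000.0
    return transmission_ms + processing_ms + propagation_ms


# Hypothetical scenario: a 10 kB sensor frame sent over a 100 Mbps link.
PAYLOAD_BITS = 10_000 * 8

# Edge server 1 km away with slower hardware (2 ms of processing).
edge_ms = latency_ms(PAYLOAD_BITS, 100e6, distance_m=1_000, processing_ms=2.0)
# Cloud region 2,000 km away with faster hardware (1 ms of processing).
cloud_ms = latency_ms(PAYLOAD_BITS, 100e6, distance_m=2_000_000, processing_ms=1.0)

print(f"edge:  {edge_ms:.3f} ms")   # edge:  2.805 ms
print(f"cloud: {cloud_ms:.3f} ms")  # cloud: 11.800 ms
```

In this scenario the cloud processes the frame faster, yet propagation delay dominates its total, which is the effect edge placement is meant to remove.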

Edge computing and cloud computing serve different purposes. Edge computing processes data locally for immediate responses, while cloud computing handles complex analytics and long-term storage. They complement each other in a hybrid architecture.

Edge Benefits: Low latency, bandwidth efficiency, local processing.

Cloud Benefits: Scalability, complex processing, global access.

| Aspect | Edge Computing | Cloud Computing |
|---|---|---|
| Latency | Very low (often single-digit ms) | Higher (often tens to hundreds of ms) |
| Bandwidth Usage | Minimal | High |
| Processing Power | Limited | High |
| Data Storage | Local | Centralized |
| Security | Local control | Provider security |

Edge Computing Architecture

Edge computing works by placing computing resources closer to data sources. Data is processed locally when immediate response is needed, while complex analytics and long-term storage occur in the cloud. This creates a balanced architecture that optimizes for both performance and capability.
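The split described above can be sketched as an edge node with two paths: an immediate local reaction and a batched summary for the cloud. This is an illustration only. The `EdgeNode` class, the temperature threshold, and the batch size are hypothetical, and the list `cloud_uploads` stands in for a real network call.

```python
from statistics import mean

# Hypothetical threshold and batch size, chosen only for illustration.
TEMP_LIMIT_C = 90.0
BATCH_SIZE = 5


class EdgeNode:
    """Reacts to each reading locally and forwards only summaries upstream."""

    def __init__(self):
        self.buffer = []         # readings waiting to be summarized
        self.local_alerts = []   # actions taken immediately at the edge
        self.cloud_uploads = []  # stand-in for messages sent to the cloud

    def on_reading(self, temp_c: float) -> None:
        # Time-sensitive path: decide locally, with no network round trip.
        if temp_c > TEMP_LIMIT_C:
            self.local_alerts.append(temp_c)
        # Non-urgent path: batch readings and upload one summary per batch.
        self.buffer.append(temp_c)
        if len(self.buffer) == BATCH_SIZE:
            self.cloud_uploads.append({
                "count": len(self.buffer),
                "mean": mean(self.buffer),
                "max": max(self.buffer),
            })
            self.buffer.clear()


node = EdgeNode()
for reading in [70.0, 71.0, 95.0, 72.0, 72.0, 69.0]:
    node.on_reading(reading)

print(node.local_alerts)   # [95.0]
print(node.cloud_uploads)  # [{'count': 5, 'mean': 76.0, 'max': 95.0}]
```

Six readings produce one upload instead of six, which is where the bandwidth saving comes from, while the over-limit reading is handled without waiting for the cloud.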

Real-World Applications

Self-driving cars need immediate responses to sensor data. Edge computing enables real-time decision-making for safety-critical operations like collision avoidance and traffic navigation.

Requirements: Millisecond latency, high reliability

Medical devices like pacemakers and monitors require immediate response to patient data. Edge computing ensures critical health data is processed locally without network delays.

Requirements: Ultra-low latency, data privacy

Manufacturing equipment requires real-time monitoring and control. Edge computing enables predictive maintenance and process optimization without cloud dependency.

Requirements: Real-time processing, reliability

AR applications need low-latency processing for smooth user experience. Edge computing reduces delay between user actions and visual responses.

Requirements: Low latency, high bandwidth

Edge Technologies

Edge hardware includes IoT sensors, edge servers, and gateways designed specifically for edge deployments. These devices typically have less processing power than cloud servers but are optimized for specific tasks.

Examples: Raspberry Pi, NVIDIA Jetson, Intel NUC

5G enables ultra-low latency communications essential for edge computing. It provides the network infrastructure needed for real-time applications at the edge.

Features (design targets, not guaranteed in every deployment): latency as low as about 1 ms, peak speeds of up to 10 Gbps, massive IoT support

Container technologies such as Docker, together with orchestrators such as Kubernetes, enable portable, lightweight applications that run consistently across edge devices and clouds.

Benefits: Portability, scalability, resource efficiency

AI models deployed at the edge enable real-time inference without cloud dependency. This is crucial for applications requiring immediate AI responses.

Examples: TensorFlow Lite, ONNX Runtime, OpenVINO

Edge Computing Knowledge Quiz

What is the primary advantage of edge computing over cloud computing for time-sensitive applications?

The primary advantage of edge computing is reduced latency. By processing data closer to its source, edge computing minimizes the time required for data transmission and processing, enabling faster response times for time-sensitive applications like autonomous vehicles, industrial control systems, and real-time analytics.

The answer is B) Reduced latency and faster response times.

Latency is the time delay between sending a request and receiving a response. In edge computing, this delay is minimized because data doesn't need to travel to distant cloud servers. This is crucial for applications where milliseconds matter, such as autonomous driving or medical monitoring systems.

Latency: Time delay between request and response

Edge Computing: Processing near data source

Real-time: Immediate response to events

• Distance equals latency

• Proximity improves response time

• Time-sensitive requires edge

• Think about time requirements

• Consider distance to cloud

• Evaluate real-time needs

• Confusing with cloud computing

• Not understanding latency benefits

• Ignoring time requirements

Explain the difference between edge computing, fog computing, and cloud computing, and describe when each approach is most appropriate.

Cloud Computing: Centralized processing in remote data centers. Best for complex analytics, large-scale storage, and applications that don't require immediate response.

Fog Computing: Intermediate layer between cloud and edge devices. Processes data in local area networks, closer than cloud but farther than edge. Good for regional processing and coordination.

Edge Computing: Processing occurs directly on or near the device generating data. Ideal for real-time applications requiring minimal latency.

When to Use: Cloud for complex analytics, Fog for regional coordination, Edge for real-time responses.

These computing paradigms form a hierarchy based on proximity to data sources. Cloud is farthest (centralized), edge is closest (on-device), and fog sits in between (local network). The choice depends on latency requirements, processing complexity, and data sensitivity.
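As a rough illustration of this choice, the function below maps a latency budget to a tier. The function name `choose_tier` and the 10 ms and 100 ms cut-offs are assumptions made for the sketch, not values from a standard. A real decision would also weigh data sensitivity and cost.

```python
def choose_tier(max_latency_ms: float, needs_heavy_analytics: bool = False) -> str:
    """Pick a processing tier from a latency budget.

    The cut-offs (10 ms and 100 ms) are illustrative assumptions.
    """
    if max_latency_ms < 10:
        # Tight budgets leave no room for a trip off the device or site.
        return "edge"
    if max_latency_ms < 100 and not needs_heavy_analytics:
        # Moderate budgets fit regional processing on the local network.
        return "fog"
    # Relaxed budgets or heavy analytics go to centralized resources.
    return "cloud"


print(choose_tier(5))                                # edge
print(choose_tier(50))                               # fog
print(choose_tier(50, needs_heavy_analytics=True))   # cloud
print(choose_tier(5000))                             # cloud
```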

Cloud: Centralized remote computing

Fog: Intermediate network computing

Edge: Local device computing

• Match proximity to requirements

• Consider complexity needs

• Evaluate security requirements

• Use hybrid approaches

• Consider cost trade-offs

• Plan for scalability

• Using wrong paradigm for needs

• Not considering hybrid solutions

• Ignoring cost implications

You're designing an IoT system for a smart factory with 1000 sensors monitoring temperature, pressure, and vibration. The system must detect anomalies within 10 milliseconds to prevent equipment damage. Design an edge computing architecture that meets these requirements and explain your choices.

Architecture Design:

• Edge Nodes: Deploy one edge computing node per 50-100 sensors (10-20 nodes for 1000 sensors)

• Local Processing: Each node runs anomaly detection algorithms

• Real-time Analysis: Process data within 1-2 ms locally, leaving the rest of the 10 ms budget for sensing and actuation

• Cloud Coordination: Send aggregated data to cloud for long-term analysis

Implementation:

• Use industrial-grade edge servers (Intel NUC, NVIDIA Jetson)

• Deploy lightweight ML models for anomaly detection

• Implement redundant nodes for reliability

• Use 5G or fiber for communication between nodes

Benefits: Meets 10ms requirement, reduces network load, ensures local reliability.
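The design above can be sketched in two small pieces: sizing the number of nodes, and a lightweight detector that each node could run per sensor. The rolling z-score shown here is a simple statistical stand-in for the "lightweight ML models" mentioned in the answer, not the method the answer prescribes. The class name, window size, and z-score limit are hypothetical, and no claim is made that this code meets the 10 ms budget on any particular hardware.

```python
from collections import deque
from math import ceil
from statistics import mean, pstdev


def nodes_needed(sensor_count: int, sensors_per_node: int) -> int:
    """Number of edge nodes required for a given sensors-per-node ratio."""
    return ceil(sensor_count / sensors_per_node)


class RollingAnomalyDetector:
    """Flags a reading that deviates strongly from the recent window.

    window and z_limit are hypothetical tuning values.
    """

    def __init__(self, window: int = 20, z_limit: float = 3.0):
        self.history = deque(maxlen=window)
        self.z_limit = z_limit

    def is_anomaly(self, value: float) -> bool:
        anomalous = False
        if len(self.history) >= 5:  # wait for a minimal baseline
            mu = mean(self.history)
            sigma = pstdev(self.history)
            if sigma > 0:
                anomalous = abs(value - mu) / sigma > self.z_limit
        if not anomalous:
            # Only normal readings update the baseline.
            self.history.append(value)
        return anomalous


print(nodes_needed(1000, 100), nodes_needed(1000, 50))  # 10 20

detector = RollingAnomalyDetector()
vibration = [1.0, 1.1, 0.9, 1.0, 1.05, 0.95, 1.0, 4.0, 1.02]
flags = [detector.is_anomaly(v) for v in vibration]
print(flags)  # only the 4.0 reading is flagged
```

Keeping anomalous readings out of the baseline is a design choice: it stops a single spike from widening the window's spread and masking the next fault.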

This example demonstrates how edge computing enables industrial IoT applications that require immediate responses. The key is distributing processing power close to data sources while maintaining coordination with centralized systems for long-term analysis.

Industrial IoT: IoT applications in manufacturing

Real-time Processing: Immediate data analysis

Redundancy: Backup systems for reliability

• Meet latency requirements

• Ensure system reliability

• Balance local and cloud processing

• Use appropriate hardware

• Optimize algorithms for edge

• Plan for failover scenarios

• Not meeting latency requirements

• Insufficient redundancy

• Poor algorithm optimization

Design an edge computing security strategy for a healthcare system that processes patient data locally on edge devices. How would you ensure data privacy, device security, and compliance with HIPAA regulations?

Security Strategy:

• Data Encryption: Encrypt all data at rest (for example with AES-256) and in transit (for example with TLS)

• Device Authentication: Use certificate-based authentication for all edge devices

• Access Control: Implement role-based access control with multi-factor authentication

• Local Processing: Keep sensitive data on-device when possible

Compliance Measures:

• Audit logs for all data access and processing

• Regular security assessments and penetration testing

• Secure boot and firmware integrity checks

• Data minimization and anonymization where possible

Implementation: Use hardware security modules, secure enclaves, and zero-trust architecture.
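One of the compliance measures above, audit logging, can be sketched with Python's standard `hmac` and `hashlib` modules. Chaining each entry's MAC to the previous one is an added technique, not something the answer specifies, chosen here so that edits to earlier entries become detectable. The key, the field names, and the identifiers such as `nurse-17` are hypothetical. A real device would keep the key in a hardware security module or secure enclave rather than in source code.

```python
import hashlib
import hmac
import json

# Hypothetical key for the example only; never hard-code keys in practice.
AUDIT_KEY = b"example-key-not-for-production"


def append_entry(log: list, actor: str, action: str, record_id: str) -> None:
    """Append an entry whose MAC also covers the previous entry's MAC."""
    prev_mac = log[-1]["mac"] if log else ""
    body = {"actor": actor, "action": action, "record_id": record_id, "prev": prev_mac}
    payload = json.dumps(body, sort_keys=True).encode()
    body["mac"] = hmac.new(AUDIT_KEY, payload, hashlib.sha256).hexdigest()
    log.append(body)


def verify(log: list) -> bool:
    """Recompute every MAC and check that the chain links are intact."""
    prev_mac = ""
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "mac"}
        if body["prev"] != prev_mac:
            return False
        payload = json.dumps(body, sort_keys=True).encode()
        expected = hmac.new(AUDIT_KEY, payload, hashlib.sha256).hexdigest()
        if not hmac.compare_digest(expected, entry["mac"]):
            return False
        prev_mac = entry["mac"]
    return True


audit_log = []
append_entry(audit_log, "nurse-17", "read", "patient-0042")
append_entry(audit_log, "dr-03", "update", "patient-0042")
print(verify(audit_log))  # True

audit_log[0]["action"] = "delete"  # simulate tampering with an old entry
print(verify(audit_log))  # False
```

This covers integrity of the log only. Confidentiality of the entries, key rotation, and secure log shipping would need separate measures.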

Healthcare edge computing requires balancing security, privacy, and performance. The distributed nature of edge computing creates more attack surfaces, requiring comprehensive security strategies that address both hardware and software vulnerabilities while maintaining regulatory compliance.

HIPAA: Health Insurance Portability and Accountability Act, the US law governing the privacy and security of health information

Zero-trust: Security model assuming no inherent trust

Hardware Security Module: Physical device that safeguards cryptographic keys and performs cryptographic operations

• Encrypt all sensitive data

• Authenticate all devices

• Maintain audit trails

• Use hardware security features

• Regular security updates

• Compliance monitoring

• Insufficient device authentication

• Poor encryption implementation

• Not maintaining compliance

Which of the following is NOT a primary performance benefit of edge computing?

Edge computing does not increase the processing power of individual devices. In fact, edge devices often have limited processing power compared to cloud servers. The benefit is reducing the distance data travels, not increasing local processing capability. The other options are genuine benefits of edge computing.

The answer is C) Increased processing power per device.

Edge computing's advantage is not in raw processing power but in proximity and local processing. Edge devices trade processing power for reduced latency and bandwidth efficiency. The benefit comes from not having to send data to distant servers, not from having more powerful local hardware.
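The trade-off can be made concrete with a small comparison of total response time on each tier. The figures below are hypothetical: the cloud server is assumed to be ten times faster but 40 ms farther away on the network, and "work units" is an abstract measure of job size introduced for the sketch.

```python
def total_ms(network_ms: float, work_units: float, units_per_ms: float) -> float:
    """Network round-trip time plus compute time for one job."""
    return network_ms + work_units / units_per_ms


# Hypothetical figures: slower hardware nearby, faster hardware far away.
EDGE = {"network_ms": 2.0, "units_per_ms": 10.0}
CLOUD = {"network_ms": 42.0, "units_per_ms": 100.0}


def faster_tier(work_units: float) -> str:
    """Return the tier with the lower total response time for this job size."""
    edge = total_ms(EDGE["network_ms"], work_units, EDGE["units_per_ms"])
    cloud = total_ms(CLOUD["network_ms"], work_units, CLOUD["units_per_ms"])
    return "edge" if edge <= cloud else "cloud"


for work in (50, 400, 1000):
    print(work, faster_tier(work))
# 50 edge
# 400 edge
# 1000 cloud
```

With these numbers the break-even point is about 444 work units: below it, the shorter network path wins despite the weaker hardware, and above it, the cloud's processing power outweighs the extra distance.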

Latency: Time delay in data transmission

Bandwidth: Data transmission capacity

Proximity: Physical closeness to data source

• Edge = proximity, not power

• Latency vs processing trade-off

• Bandwidth efficiency

• Optimize for latency, not power

• Consider data aggregation

• Plan for limited resources

• Expecting cloud-level power at edge

• Not optimizing for limited resources

• Ignoring latency benefits

FAQ

Q: How does edge computing relate to 5G networks?

A: 5G and edge computing are complementary technologies. 5G provides the ultra-low latency and high bandwidth needed for edge applications, while edge computing reduces the load on 5G networks by processing data locally. Together, they enable applications like autonomous vehicles, AR/VR, and industrial automation that require millisecond response times.

Q: Can edge computing replace cloud computing?

A: No, edge computing complements rather than replaces cloud computing. Edge handles time-sensitive, local processing while cloud manages complex analytics, long-term storage, and global coordination. The future is hybrid architectures that leverage both technologies based on application requirements.